Building a k8s cluster on bare servers

Environment preparation

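- At least 3 servers (this walkthrough uses 3 pay-as-you-go Alibaba Cloud servers), each with at least 4 cores and 4 GB of memory. They must be able to reach each other over the private network, which means they must be in the same subnet.

The private IPs of the 3 servers in this walkthrough are:

192.168.0.1 (master), 192.168.0.2, 192.168.0.3. One of the 3 servers is the master node (the node the cluster is initialized on) and the other two are worker nodes.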

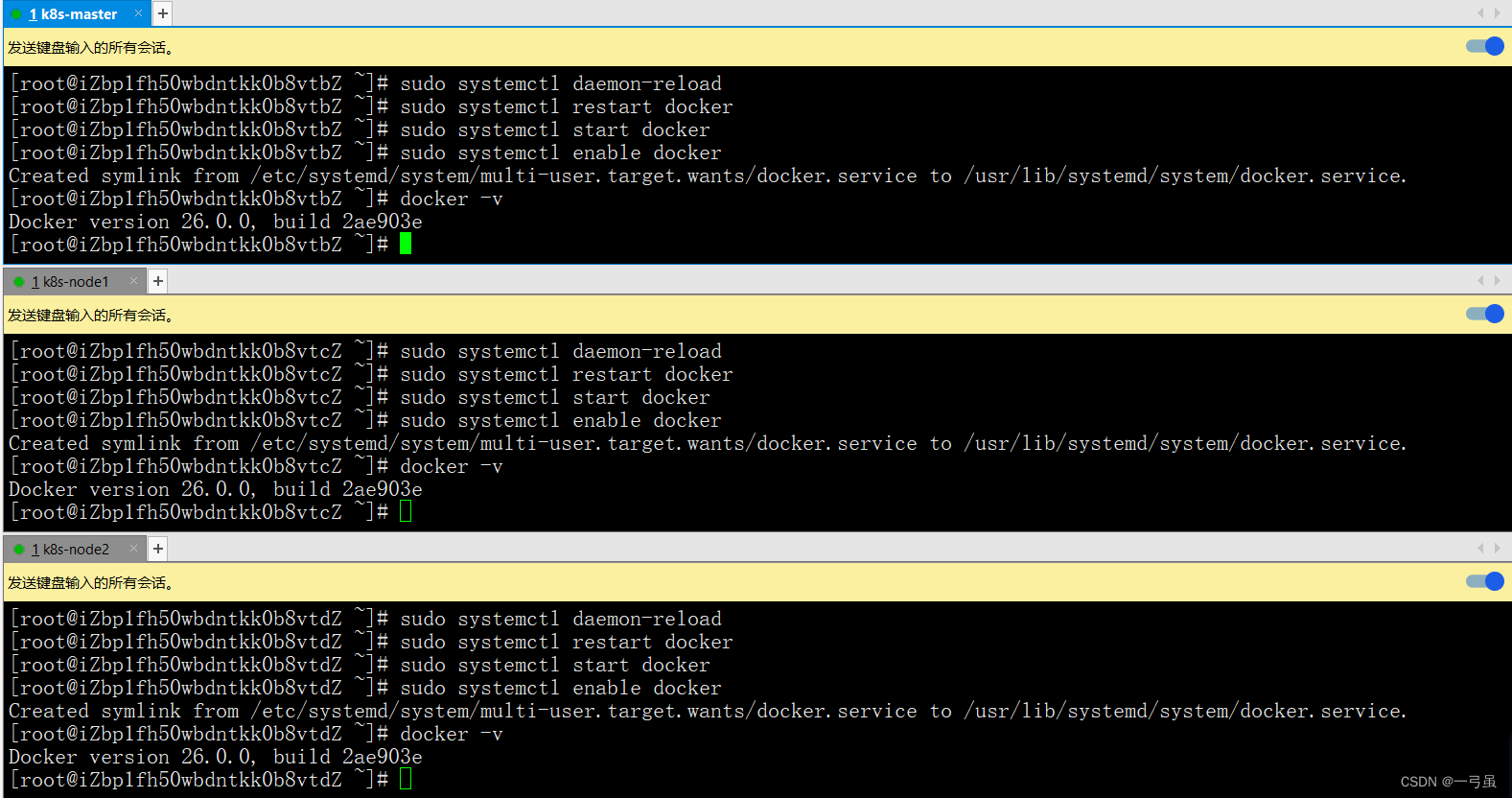

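- docker must be installed on all 3 servers

Run the commands on all 3 servers at the same time:

In Xshell, right-click in the command input bar and choose to send the key input to all sessions.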

    # Remove any previously installed docker
    sudo yum remove docker \
        docker-client \
        docker-client-latest \
        docker-common \
        docker-latest \
        docker-latest-logrotate \
        docker-logrotate \
        docker-engine

    # Install the gcc toolchain
    sudo yum install -y gcc
    sudo yum install -y gcc-c++

    # Install yum-utils, which provides yum-config-manager
    sudo yum install -y yum-utils
    # Use a mirror inside China
    sudo yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
    # Refresh the yum index
    sudo yum makecache fast
    # Install docker
    sudo yum install -y docker-ce docker-ce-cli containerd.io

    # Configure the Alibaba Cloud registry mirror; look up your own address under your Alibaba Cloud image accelerator
    sudo mkdir -p /etc/docker
    sudo tee /etc/docker/daemon.json <<-'EOF'
    {
      "registry-mirrors": ["https://g8vxwvax.mirror.aliyuncs.com"]
    }
    EOF
    sudo systemctl daemon-reload
    sudo systemctl restart docker
    # Start Docker at boot
    sudo systemctl enable docker
    # Check that the installation succeeded
    docker -v

- System preparation required before installing k8s (official requirements)

Nodes must not share a hostname, MAC address and so on, so give each server a different hostname. Run one of these on each of the 3 servers respectively:

    hostnamectl set-hostname k8s-master
    hostnamectl set-hostname k8s-node1
    hostnamectl set-hostname k8s-node2

Run the rest on all 3 servers:

    # Stop and disable the firewall
    sudo systemctl stop firewalld
    sudo systemctl disable firewalld

    # Put SELinux into permissive mode, which effectively disables it
    sudo setenforce 0
    sudo sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

    # Turn off swap
    sudo swapoff -a
    sudo sed -ri 's/.*swap.*/#&/' /etc/fstab

    # Let iptables see bridged traffic (all nodes)
    cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf
    br_netfilter
    EOF
    # Load the module now; the file above only takes effect at boot
    sudo modprobe br_netfilter

    cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf
    net.bridge.bridge-nf-call-ip6tables = 1
    net.bridge.bridge-nf-call-iptables = 1
    EOF
    sudo sysctl --system

At this point the whole environment is configured. Next we build the k8s cluster.
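
Before moving on, it can be worth confirming that the swap and SELinux changes took effect, since a `sed` pattern that does not match fails silently. The following is a minimal sketch, not an official check: `swap_is_off` and `selinux_config_mode` are hypothetical helper names, and the optional file argument exists only so the helpers can be tried against sample files.

```shell
# swap_is_off [FILE]: succeed when the swap table lists no active swap area.
# FILE defaults to /proc/swaps, which always starts with one header line.
swap_is_off() {
  local table=${1:-/proc/swaps}
  # Any non-empty line after the header is an active swap device or file.
  [ "$(tail -n +2 "$table" | grep -c .)" -eq 0 ]
}

# selinux_config_mode [FILE]: print the persistent SELinux mode,
# i.e. the value of the SELINUX= line (SELINUXTYPE= and comments are ignored).
selinux_config_mode() {
  local cfg=${1:-/etc/selinux/config}
  sed -n 's/^SELINUX=\([a-z]*\).*/\1/p' "$cfg"
}
```

On a prepared node, `swap_is_off && echo "swap is off"` should print the message and `selinux_config_mode` should print `permissive`.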

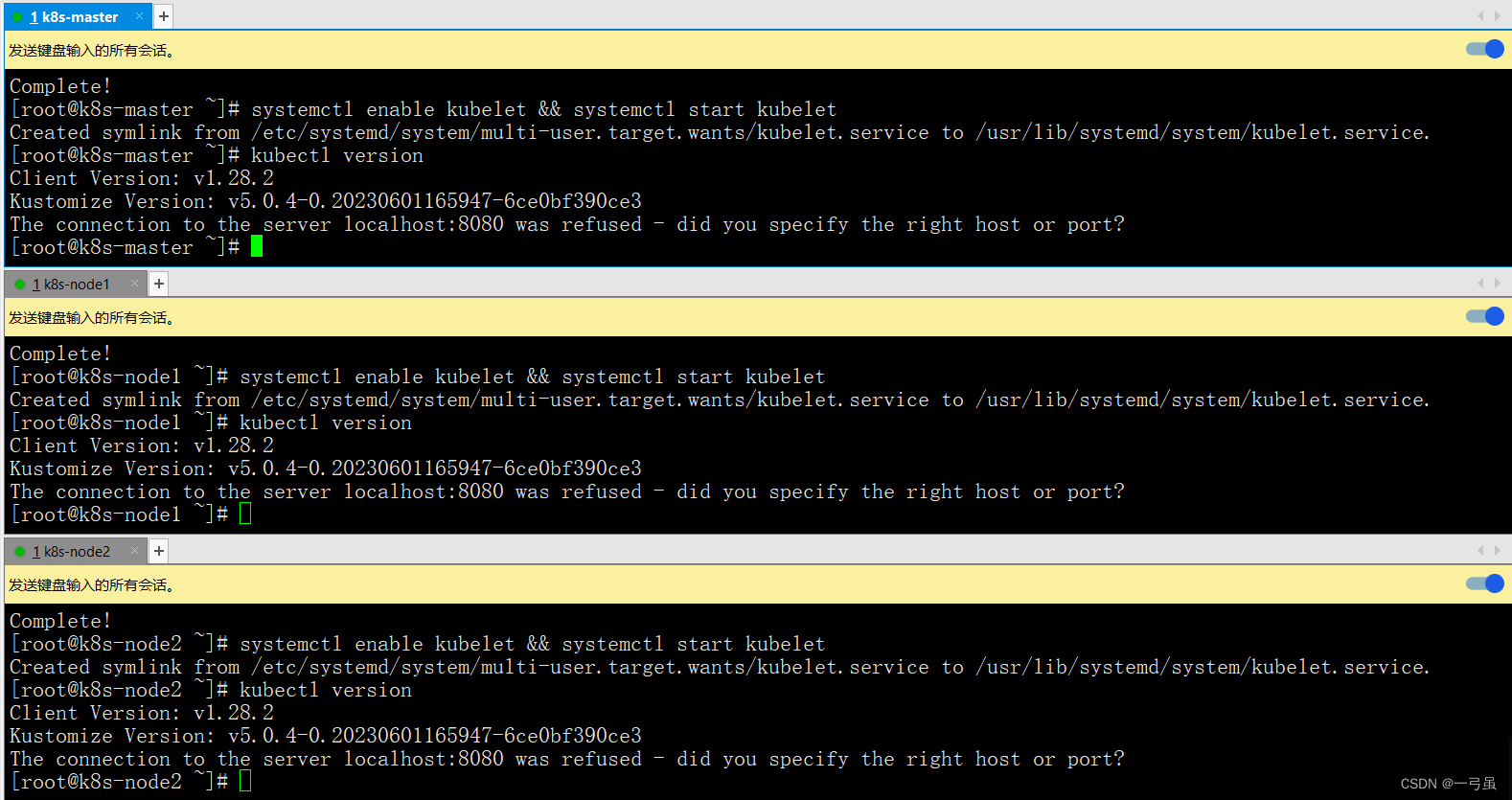

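Install the three cluster tools: kubelet, kubeadm, kubectl

You need to install the following packages on every machine:

- kubeadm: the command used to initialize the cluster.
- kubelet: runs on every node in the cluster and starts Pods and containers.
- kubectl: the command-line tool for talking to the cluster.

See the k8s installation steps on the Alibaba open-source mirror site: https://developer.aliyun.com/mirror/kubernetes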

    # Run on all 3 servers
    # Install kubeadm, kubelet and kubectl. Google cannot be reached directly from inside China,
    # so the yum repo file points at a domestic mirror instead of the Google repo used in the official docs
    cat <<EOF > /etc/yum.repos.d/kubernetes.repo
    [kubernetes]
    name=Kubernetes
    baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
    enabled=1
    gpgcheck=1
    repo_gpgcheck=1
    gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
    EOF
    setenforce 0
    yum install -y kubelet kubeadm kubectl
    systemctl enable kubelet && systemctl start kubelet

    # Show version information
    kubectl version
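
The install command above takes whatever version the repo currently offers, while the later `kubeadm init` step in this walkthrough names v1.28.2 explicitly. One way to keep the two in step is to pin the package versions. This is a small sketch under that assumption; `k8s_pkgs` is a hypothetical helper, and it relies only on yum's standard `name-version` package syntax.

```shell
# k8s_pkgs VERSION: print the three package specs pinned to VERSION.
# Accepts the version with or without a leading "v".
k8s_pkgs() {
  local ver=${1#v}
  printf 'kubelet-%s kubeadm-%s kubectl-%s\n' "$ver" "$ver" "$ver"
}
```

Usage would look like `yum install -y $(k8s_pkgs v1.28.2)`, provided the repo still carries that version.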

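In k8s versions before v1.24, using docker as the container engine relied on dockershim, a component built into k8s. The v1.24 release removed dockershim, and cri-dockerd took its place (other container runtime interfaces exist as well). CRI stands for Container Runtime Interface, so cri-dockerd is simply the CRI implementation that uses docker as the container engine. To keep docker as the container runtime for k8s, deploy cri-dockerd first: kubelet talks to cri-dockerd, and cri-dockerd in turn talks to the docker API, so docker keeps working as the container engine under k8s.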

    # Download
    wget https://github.com/Mirantis/cri-dockerd/releases/download/v0.3.4/cri-dockerd-0.3.4.amd64.tgz
    # Unpack cri-dockerd
    tar xvf cri-dockerd-*.amd64.tgz
    cp -r cri-dockerd/ /usr/bin/
    chmod +x /usr/bin/cri-dockerd/cri-dockerd

    # Write the cri-docker service unit
    # (the heredoc delimiter is quoted so the shell leaves $MAINPID for systemd to expand)
    cat > /usr/lib/systemd/system/cri-docker.service <<'EOF'
    [Unit]
    Description=CRI Interface for Docker Application Container Engine
    Documentation=https://docs.mirantis.com
    After=network-online.target firewalld.service docker.service
    Wants=network-online.target
    Requires=cri-docker.socket

    [Service]
    Type=notify
    ExecStart=/usr/bin/cri-dockerd/cri-dockerd --network-plugin=cni --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.7
    ExecReload=/bin/kill -s HUP $MAINPID
    TimeoutSec=0
    RestartSec=2
    Restart=always
    StartLimitBurst=3
    StartLimitInterval=60s
    LimitNOFILE=infinity
    LimitNPROC=infinity
    LimitCORE=infinity
    TasksMax=infinity
    Delegate=yes
    KillMode=process

    [Install]
    WantedBy=multi-user.target
    EOF

    # Write the cri-docker socket unit
    cat > /usr/lib/systemd/system/cri-docker.socket <<EOF
    [Unit]
    Description=CRI Docker Socket for the API
    PartOf=cri-docker.service

    [Socket]
    ListenStream=%t/cri-dockerd.sock
    SocketMode=0660
    SocketUser=root
    SocketGroup=docker

    [Install]
    WantedBy=sockets.target
    EOF

    # After adding or changing a unit file (.service, .socket and so on), run this so systemd reloads its configuration
    systemctl daemon-reload
    # Make sure docker is running
    # Enable cri-docker.service and start it immediately
    systemctl enable --now cri-docker.service
    # Show the current state of cri-docker.service, including whether it is running and enabled
    systemctl status cri-docker.service

Output like the following means it started successfully:

    [root@k8s-master ~]# systemctl status cri-docker.service
    ● cri-docker.service - CRI Interface for Docker Application Container Engine
       Loaded: loaded (/usr/lib/systemd/system/cri-docker.service; enabled; vendor preset: disabled)
       Active: active (running) since Sun 2024-03-24 16:01:06 CST; 9s ago
         Docs: https://docs.mirantis.com
     Main PID: 3238 (cri-dockerd)
        Tasks: 9
       Memory: 14.5M
       CGroup: /system.slice/cri-docker.service
               └─3238 /usr/bin/cri-dockerd/cri-dockerd --network-plugin=cni --pod-infra-container-image=registry.aliyuncs.com/googl...

    Mar 24 16:01:06 k8s-master cri-dockerd[3238]: time="2024-03-24T16:01:06+08:00" level=info msg="Start docker client with r...ut 0s"
    Mar 24 16:01:06 k8s-master cri-dockerd[3238]: time="2024-03-24T16:01:06+08:00" level=info msg="Hairpin mode is set to none"
    Mar 24 16:01:06 k8s-master cri-dockerd[3238]: time="2024-03-24T16:01:06+08:00" level=info msg="Loaded network plugin cni"
    Mar 24 16:01:06 k8s-master cri-dockerd[3238]: time="2024-03-24T16:01:06+08:00" level=info msg="Docker cri networking mana...n cni"
    Mar 24 16:01:06 k8s-master cri-dockerd[3238]: time="2024-03-24T16:01:06+08:00" level=info msg="Docker Info: &{ID:a848d1ba... [Nati
    Mar 24 16:01:06 k8s-master cri-dockerd[3238]: time="2024-03-24T16:01:06+08:00" level=info msg="Setting cgroupDriver cgroupfs"
    Mar 24 16:01:06 k8s-master cri-dockerd[3238]: time="2024-03-24T16:01:06+08:00" level=info msg="Docker cri received runtim...:,},}"
    Mar 24 16:01:06 k8s-master cri-dockerd[3238]: time="2024-03-24T16:01:06+08:00" level=info msg="Starting the GRPC backend ...face."
    Mar 24 16:01:06 k8s-master cri-dockerd[3238]: time="2024-03-24T16:01:06+08:00" level=info msg="Start cri-dockerd grpc backend"
    Mar 24 16:01:06 k8s-master systemd[1]: Started CRI Interface for Docker Application Container Engine.
    Hint: Some lines were ellipsized, use -l to show in full.

Summary: environment preparation

- Machine environment prepared

- k8s components installed

- CRI environment installed
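
The three items above can be spot-checked on each node before initializing the cluster. A minimal sketch follows; `missing_tools` is a hypothetical helper that only reports which of the named commands are not on the PATH.

```shell
# missing_tools NAME...: print, one per line, each command that is not on the PATH.
# Prints nothing when everything is installed.
missing_tools() {
  local tool
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}
```

For example, `missing_tools docker kubelet kubeadm kubectl` should print nothing on a prepared node. The CRI side can be checked with `[ -S /var/run/cri-dockerd.sock ] && echo "cri-dockerd socket present"`, using the socket path that the later kubeadm commands pass to `--cri-socket`.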

Install and initialize the master node
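
The init command in this section passes a service range (10.96.0.0/16) and a pod range (192.169.0.0/16), with the requirement that neither overlaps the network the nodes live in. A rough way to check candidate ranges is sketched here. `ip_to_int` and `cidr_overlap` are illustrative helpers, not part of kubeadm, and the node subnet 192.168.0.0/24 used in the usage note is an assumption, since only the three node IPs are given.

```shell
# ip_to_int A.B.C.D: print the IPv4 address as a 32-bit integer.
ip_to_int() {
  # Split the dotted quad on "." into the four positional parameters.
  local IFS=.
  set -- $1
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# cidr_overlap CIDR1 CIDR2: succeed when the two IPv4 ranges overlap.
# Two CIDR blocks overlap exactly when they agree on the shorter of the two prefixes.
cidr_overlap() {
  local ip1=${1%/*} len1=${1#*/} ip2=${2%/*} len2=${2#*/}
  local len=$(( len1 < len2 ? len1 : len2 ))
  local mask=$(( (0xFFFFFFFF << (32 - len)) & 0xFFFFFFFF ))
  [ $(( $(ip_to_int "$ip1") & mask )) -eq $(( $(ip_to_int "$ip2") & mask )) ]
}
```

With the assumed node subnet, `cidr_overlap 192.168.0.0/24 192.169.0.0/16` and `cidr_overlap 192.168.0.0/24 10.96.0.0/16` both fail, meaning no overlap, whereas a pod range of 192.168.0.0/16 would be reported as overlapping.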

    # On every machine, add a hosts entry for the master node; change the IP to your own master's IP
    echo "192.168.0.1 cluster-master" >> /etc/hosts
    # On the worker nodes, ping to test that the mapping works
    ping cluster-master

    # If init fails, reset with kubeadm and try again
    kubeadm reset --cri-socket unix:///var/run/cri-dockerd.sock

    ####### Initialize the master node (run on the master only) #######
    # Change the apiserver address to your master node's IP
    # The service and pod network ranges must not overlap with the network the master's IP is in
    kubeadm init \
      --apiserver-advertise-address=192.168.0.1 \
      --control-plane-endpoint=cluster-master \
      --image-repository registry.cn-hangzhou.aliyuncs.com/google_containers \
      --kubernetes-version v1.28.2 \
      --service-cidr=10.96.0.0/16 \
      --pod-network-cidr=192.169.0.0/16 \
      --cri-socket unix:///var/run/cri-dockerd.sock

Wait for the command to finish. On success you will see:

    [root@k8s-master ~]# kubeadm init \
    > --apiserver-advertise-address=192.168.0.1 \
    > --control-plane-endpoint=cluster-master \
    > --image-repository registry.cn-hangzhou.aliyuncs.com/google_containers \
    > --kubernetes-version v1.28.2 \
    > --service-cidr=10.96.0.0/16 \
    > --pod-network-cidr=192.169.0.0/16 \
    > --cri-socket unix:///var/run/cri-dockerd.sock
    [init] Using Kubernetes version: v1.28.2
    ......
    [addons] Applied essential addon: CoreDNS
    [addons] Applied essential addon: kube-proxy

    Your Kubernetes control-plane has initialized successfully!

    To start using your cluster, you need to run the following as a regular user:

      mkdir -p $HOME/.kube
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config

    Alternatively, if you are the root user, you can run:

      export KUBECONFIG=/etc/kubernetes/admin.conf

    You should now deploy a pod network to the cluster.
    Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
      https://kubernetes.io/docs/concepts/cluster-administration/addons/

    You can now join any number of control-plane nodes by copying certificate authorities
    and service account keys on each node and then running the following as root:

      kubeadm join cluster-master:6443 --token 9jk1zp.v738zd885ew7m7lp \
            --discovery-token-ca-cert-hash sha256:6a1cb9a74d02f28b06471114e28faa8de6cbc3501eb3a9a989123840c281e85a \
            --control-plane

    Then you can join any number of worker nodes by running the following on each as root:

    kubeadm join cluster-master:6443 --token 9jk1zp.v738zd885ew7m7lp \
            --discovery-token-ca-cert-hash sha256:6a1cb9a74d02f28b06471114e28faa8de6cbc3501eb3a9a989123840c281e85a

Following the hints above, run these commands on the master:

    mkdir -p $HOME/.kube
    sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
    sudo chown $(id -u):$(id -g) $HOME/.kube/config
    export KUBECONFIG=/etc/kubernetes/admin.conf

Once initialization is done, you can check the node information:

    kubectl get nodes

    # The node is in NotReady state
    [root@k8s-master ~]# kubectl get nodes
    NAME         STATUS     ROLES           AGE     VERSION
    k8s-master   NotReady   control-plane   5m47s   v1.28.2

Join the worker nodes to the cluster

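The join command and its arguments are printed when initialization completes. They are different for every cluster, so copy the ones you generated.

They are the last few lines of the output from initializing the master node above. Look at your own output: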

    Then you can join any number of worker nodes by running the following on each as root:

    kubeadm join cluster-master:6443 --token 9jk1zp.v738zd885ew7m7lp \
            --discovery-token-ca-cert-hash sha256:6a1cb9a74d02f28b06471114e28faa8de6cbc3501eb3a9a989123840c281e85a

Using your own generated values, run the following command on each worker node:

    kubeadm join cluster-master:6443 --token 9jk1zp.v738zd885ew7m7lp \
      --discovery-token-ca-cert-hash sha256:6a1cb9a74d02f28b06471114e28faa8de6cbc3501eb3a9a989123840c281e85a \
      --cri-socket unix:///var/run/cri-dockerd.sock
    # Note: current versions no longer support docker out of the box, so if the server uses docker,
    # append --cri-socket unix:///var/run/cri-dockerd.sock to the command.

When it finishes, check the node information on the master:

    [root@k8s-master ~]# kubectl get nodes
    NAME         STATUS     ROLES           AGE   VERSION
    k8s-master   NotReady   control-plane   11m   v1.28.2
    k8s-node1    NotReady   <none>          13s   v1.28.2
    k8s-node2    NotReady   <none>          7s    v1.28.2

    # The token is valid for 24 hours by default. Once it expires it can no longer be used; create a new one like this:
    kubeadm token create --print-join-command

    # When several tokens are generated in a short time, it is best to delete the previous one after creating a new one.
    # List tokens
    kubeadm token list
    # Delete a token
    kubeadm token delete tokenid

Deploy calico

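We need to deploy a pod network plugin. A pod network is required for Pods to communicate with each other. k8s supports many network solutions; calico is used here.

Docs: https://kubernetes.io/docs/concepts/cluster-administration/addons/

calico archived versions: https://docs.tigera.io/archive#v3.1.7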

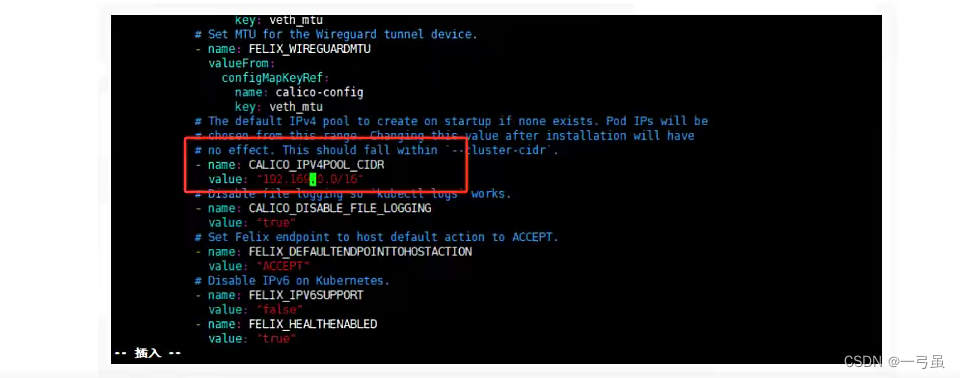

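    # Download calico.yaml
    curl https://docs.tigera.io/archive/v3.25/manifests/calico.yaml -O

When initializing the master above we set --pod-network-cidr=192.169.0.0/16, so the pod CIDR in calico.yaml (the CALICO_IPV4POOL_CIDR setting) must be changed to match.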

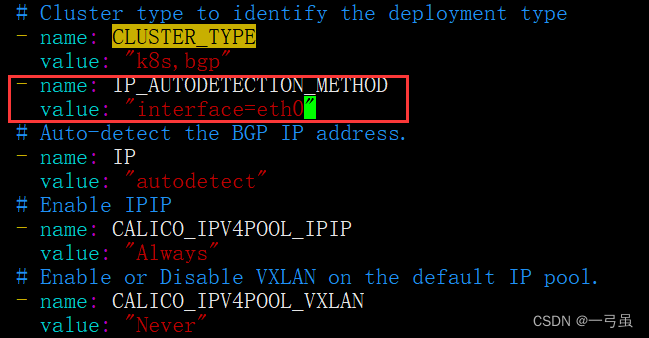

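Possible problem: calico auto-detects which NIC to use, and on machines whose NIC is not named eth0 it may pick the wrong one and fail to start, in which case the NIC has to be configured manually.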

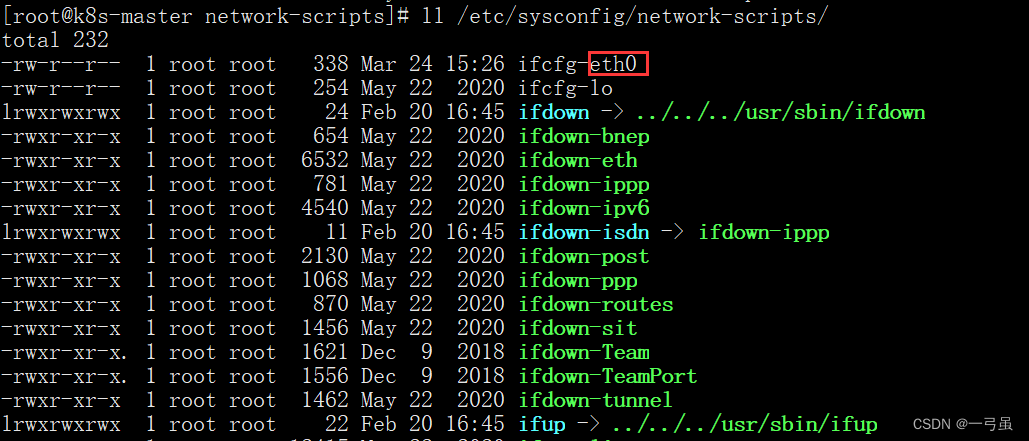

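Use the following command to find the NIC name:

    ll /etc/sysconfig/network-scripts/

The boxed entry is the NIC name; here it is eth0. On a cluster built from virtual machines the NIC name may be different.

If the NIC is not named eth0, edit calico.yaml and add the following entry at the same level as the CLUSTER_TYPE entry: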

    - name: IP_AUTODETECTION_METHOD
      value: "interface=eth0" # change to your own NIC name

If the NIC is named eth0, this entry is not needed.
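
As an alternative to reading the network-scripts directory, the interface names can be listed from `/sys/class/net`, which exists on any Linux host. The sketch below is only a convenience: `list_nics` is a hypothetical helper, and the prefixes it skips (loopback, docker, veth, calico and tunnel devices) are an assumption about which interfaces are not physical NICs on these nodes.

```shell
# list_nics [DIR]: print candidate NIC names, one per line.
# DIR defaults to /sys/class/net, where each entry is named after an interface.
list_nics() {
  local dir=${1:-/sys/class/net} path
  for path in "$dir"/*; do
    [ -e "$path" ] || continue
    case ${path##*/} in
      lo|docker*|veth*|cali*|tunl*|br-*) ;;   # skip loopback and container/virtual interfaces
      *) printf '%s\n' "${path##*/}" ;;
    esac
  done
}
```

If `list_nics` prints a single name such as ens33, that is the value to put after `interface=` in the entry above.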

After editing the configuration, apply it, then wait a while for it to take effect.

    kubectl apply -f calico.yaml

    [root@k8s-master ~]# kubectl apply -f calico.yaml
    poddisruptionbudget.policy/calico-kube-controllers created
    serviceaccount/calico-kube-controllers created
    serviceaccount/calico-node created
    configmap/calico-config created
    customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/caliconodestatuses.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/ipreservations.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/kubecontrollersconfigurations.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created
    customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org created
    clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created
    clusterrole.rbac.authorization.k8s.io/calico-node created
    clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created
    clusterrolebinding.rbac.authorization.k8s.io/calico-node created
    daemonset.apps/calico-node created
    deployment.apps/calico-kube-controllers created

After waiting a while, the node status changes to Ready, and this step is done.

    [root@k8s-master ~]# kubectl get nodes
    NAME         STATUS   ROLES           AGE   VERSION
    k8s-master   Ready    control-plane   40m   v1.28.2
    k8s-node1    Ready    <none>          29m   v1.28.2
    k8s-node2    Ready    <none>          29m   v1.28.2

List all pods:

    kubectl get pod -A

    [root@k8s-master ~]# kubectl get pod -A
    NAMESPACE     NAME                                       READY   STATUS    RESTARTS   AGE
    kube-system   calico-kube-controllers-658d97c59c-wzj97   1/1     Running   0          9m18s
    kube-system   calico-node-f76hr                          1/1     Running   0          2m10s
    kube-system   calico-node-llncs                          1/1     Running   0          2m
    kube-system   calico-node-lwqjh                          1/1     Running   0          109s
    kube-system   coredns-6554b8b87f-pbmbq                   1/1     Running   0          42m
    kube-system   coredns-6554b8b87f-pgrq2                   1/1     Running   0          42m
    kube-system   etcd-k8s-master                            1/1     Running   0          42m
    kube-system   kube-apiserver-k8s-master                  1/1     Running   0          42m
    kube-system   kube-controller-manager-k8s-master         1/1     Running   0          42m
    kube-system   kube-proxy-58b62                           1/1     Running   0          31m
    kube-system   kube-proxy-b6x29                           1/1     Running   0          42m
    kube-system   kube-proxy-jxct4                           1/1     Running   0          31m
    kube-system   kube-scheduler-k8s-master                  1/1     Running   0          42m

Test k8s self-healing

We power off one node and then start it again.

Power off k8s-node2:

    [root@k8s-node2 ~]# poweroff
    Connection closing...Socket close.

    Connection closed by foreign host.

    Disconnected from remote host(k8s-node2) at 17:02:36.

    Type `help' to learn how to use Xshell prompt.

Check the node status on the master:

    [root@k8s-master ~]# kubectl get nodes
    NAME         STATUS     ROLES           AGE   VERSION
    k8s-master   Ready      control-plane   49m   v1.28.2
    k8s-node1    Ready      <none>          37m   v1.28.2
    k8s-node2    NotReady   <none>          37m   v1.28.2

k8s-node2 is now NotReady. Next, start the k8s-node2 host again.

Wait a while, then check the node status on the master once more:

    [root@k8s-master ~]# kubectl get nodes
    NAME         STATUS   ROLES           AGE   VERSION
    k8s-master   Ready    control-plane   51m   v1.28.2
    k8s-node1    Ready    <none>          40m   v1.28.2
    k8s-node2    Ready    <none>          39m   v1.28.2

k8s-node2 has rejoined the cluster automatically.
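
Instead of rerunning the command and reading the table by eye, the node listing can be filtered for nodes that are not Ready. A minimal sketch, assuming the default column layout shown above; `not_ready_nodes` is a hypothetical helper that reads the `kubectl get nodes` table on standard input.

```shell
# not_ready_nodes: read a `kubectl get nodes` table on stdin and print the NAME
# of every node whose STATUS column is not exactly "Ready".
# Note that a cordoned node ("Ready,SchedulingDisabled") is also reported.
not_ready_nodes() {
  # NR > 1 skips the header row; $1 is NAME and $2 is STATUS.
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}
```

Usage: `kubectl get nodes | not_ready_nodes`. To block until a node is back, kubectl itself offers `kubectl wait --for=condition=Ready node/k8s-node2 --timeout=300s`.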

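k8s has strong self-healing capabilities, which mainly cover the following:

Automatic restart: k8s monitors container state, and when a container crashes or exits abnormally it restarts the container automatically to keep the application available.

Automatic scaling: based on resource utilization and load, k8s can automatically increase or decrease the number of container replicas to match the application's needs. Through horizontal scaling and automatic load balancing, k8s adjusts the replica count dynamically, improving the application's scalability and performance.

Automatic fault tolerance and failover: k8s provides a container health-check mechanism that monitors container state. When a container is unhealthy or unreachable, it is automatically taken out of service so that it does not affect other containers. k8s also supports failover, redeploying failed containers onto other available nodes to keep the application highly available.

Automatic rolling upgrades: k8s supports rolling upgrades, updating container versions step by step without interrupting service. By replacing container replicas gradually, k8s upgrades the application smoothly and lowers the risk of the upgrade.

Automatic recovery: k8s monitors node health. When a node fails or becomes unreachable, its containers are automatically rescheduled onto other available nodes.

In short, k8s manages and schedules containers automatically: it monitors container state, restarts containers, scales replicas, handles faults and failover, and performs rolling upgrades. Together these provide the self-healing that keeps applications highly available, scalable and stable.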

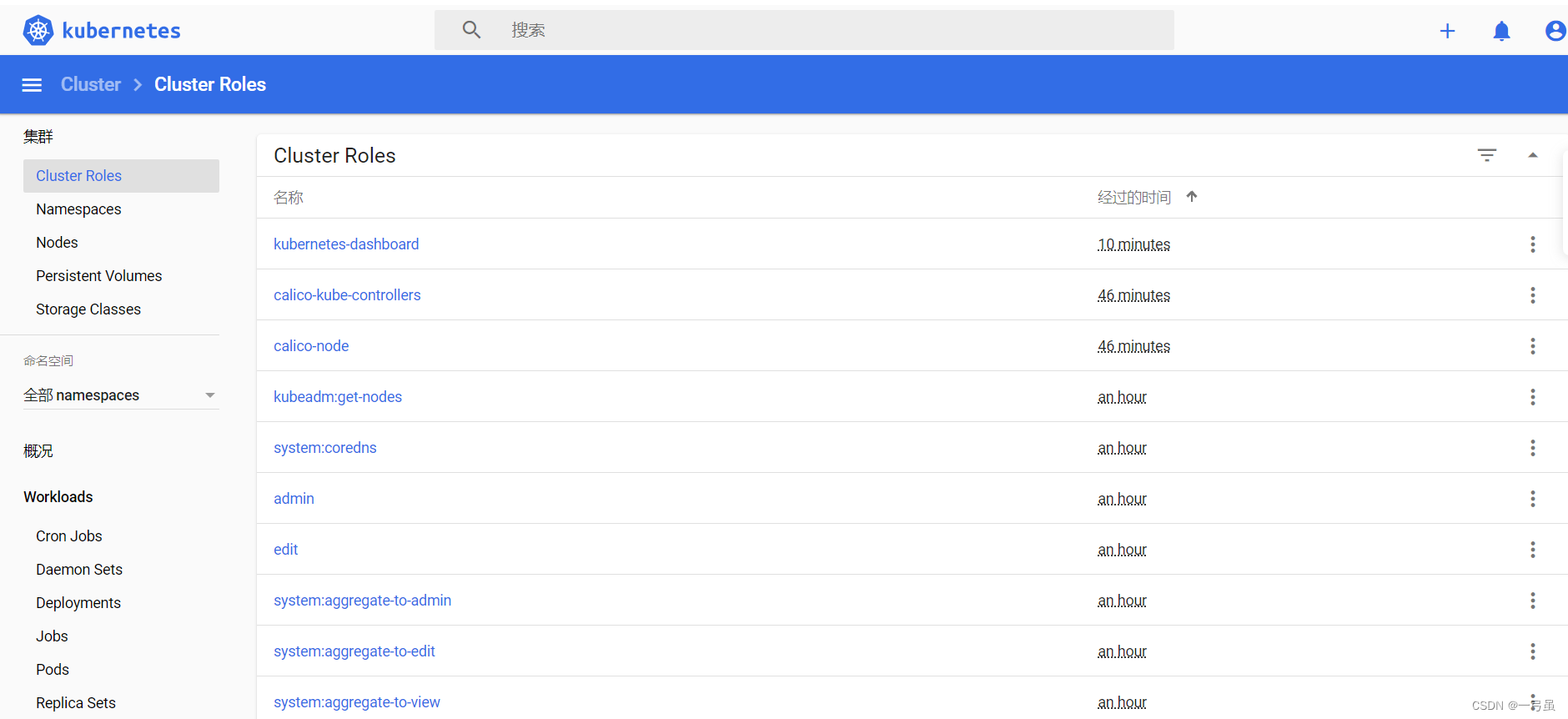

Install the k8s Dashboard web UI

Project: https://github.com/kubernetes/dashboard

Edit the file recommended.yaml:

    apiVersion: v1
    kind: Namespace
    metadata:
      name: kubernetes-dashboard
    ---
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      name: kubernetes-dashboard
      namespace: kubernetes-dashboard
    ---
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: dashboard-admin
      namespace: kubernetes-dashboard
    ---
    kind: Service
    apiVersion: v1
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      name: kubernetes-dashboard
      namespace: kubernetes-dashboard
    spec:
      type: NodePort
      ports:
        - port: 443
          targetPort: 8443
          nodePort: 31443
      selector:
        k8s-app: kubernetes-dashboard
    ---
    apiVersion: v1
    kind: Secret
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      name: kubernetes-dashboard-certs
      namespace: kubernetes-dashboard
    type: Opaque
    ---
    apiVersion: v1
    kind: Secret
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      name: kubernetes-dashboard-csrf
      namespace: kubernetes-dashboard
    type: Opaque
    data:
      csrf: ""
    ---
    apiVersion: v1
    kind: Secret
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      name: kubernetes-dashboard-key-holder
      namespace: kubernetes-dashboard
    type: Opaque
    ---
    kind: ConfigMap
    apiVersion: v1
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      name: kubernetes-dashboard-settings
      namespace: kubernetes-dashboard
    ---
    kind: Role
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      name: kubernetes-dashboard
      namespace: kubernetes-dashboard
    rules:
      - apiGroups: [""]
        resources: ["secrets"]
        resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs", "kubernetes-dashboard-csrf"]
        verbs: ["get", "update", "delete"]
      - apiGroups: [""]
        resources: ["configmaps"]
        resourceNames: ["kubernetes-dashboard-settings"]
        verbs: ["get", "update"]
      - apiGroups: [""]
        resources: ["services"]
        resourceNames: ["heapster", "dashboard-metrics-scraper"]
        verbs: ["proxy"]
      - apiGroups: [""]
        resources: ["services/proxy"]
        resourceNames: ["heapster", "http:heapster:", "https:heapster:", "dashboard-metrics-scraper", "http:dashboard-metrics-scraper"]
        verbs: ["get"]
    ---
    kind: ClusterRole
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      name: kubernetes-dashboard
    rules:
      - apiGroups: ["metrics.k8s.io"]
        resources: ["pods", "nodes"]
        verbs: ["get", "list", "watch"]
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      name: kubernetes-dashboard
      namespace: kubernetes-dashboard
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: Role
      name: kubernetes-dashboard
    subjects:
      - kind: ServiceAccount
        name: kubernetes-dashboard
        namespace: kubernetes-dashboard
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      name: kubernetes-dashboard
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: kubernetes-dashboard
    subjects:
      - kind: ServiceAccount
        name: kubernetes-dashboard
        namespace: kubernetes-dashboard
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      name: dashboard-admin
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: cluster-admin
    subjects:
      - kind: ServiceAccount
        name: dashboard-admin
        namespace: kubernetes-dashboard
    ---
    kind: Deployment
    apiVersion: apps/v1
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      name: kubernetes-dashboard
      namespace: kubernetes-dashboard
    spec:
      replicas: 1
      revisionHistoryLimit: 10
      selector:
        matchLabels:
          k8s-app: kubernetes-dashboard
      template:
        metadata:
          labels:
            k8s-app: kubernetes-dashboard
        spec:
          containers:
            - name: kubernetes-dashboard
              image: kubernetesui/dashboard:v2.0.0-rc7
              imagePullPolicy: Always
              ports:
                - containerPort: 8443
                  protocol: TCP
              args:
                - --auto-generate-certificates
                - --namespace=kubernetes-dashboard
              volumeMounts:
                - name: kubernetes-dashboard-certs
                  mountPath: /certs
                - mountPath: /tmp
                  name: tmp-volume
              livenessProbe:
                httpGet:
                  scheme: HTTPS
                  path: /
                  port: 8443
                initialDelaySeconds: 30
                timeoutSeconds: 30
              securityContext:
                allowPrivilegeEscalation: false
                readOnlyRootFilesystem: true
                runAsUser: 1001
                runAsGroup: 2001
          volumes:
            - name: kubernetes-dashboard-certs
              secret:
                secretName: kubernetes-dashboard-certs
            - name: tmp-volume
              emptyDir: {}
          serviceAccountName: kubernetes-dashboard
          nodeSelector:
            "beta.kubernetes.io/os": linux
          tolerations:
            - key: node-role.kubernetes.io/master
              effect: NoSchedule
    ---
    kind: Service
    apiVersion: v1
    metadata:
      labels:
        k8s-app: dashboard-metrics-scraper
      name: dashboard-metrics-scraper
      namespace: kubernetes-dashboard
    spec:
      ports:
        - port: 8000
          targetPort: 8000
      selector:
        k8s-app: dashboard-metrics-scraper
    ---
    kind: Deployment
    apiVersion: apps/v1
    metadata:
      labels:
        k8s-app: dashboard-metrics-scraper
      name: dashboard-metrics-scraper
      namespace: kubernetes-dashboard
    spec:
      replicas: 1
      revisionHistoryLimit: 10
      selector:
        matchLabels:
          k8s-app: dashboard-metrics-scraper
      template:
        metadata:
          labels:
            k8s-app: dashboard-metrics-scraper
          annotations:
            seccomp.security.alpha.kubernetes.io/pod: 'runtime/default'
        spec:
          containers:
            - name: dashboard-metrics-scraper
              image: kubernetesui/metrics-scraper:v1.0.4
              ports:
                - containerPort: 8000
                  protocol: TCP
              livenessProbe:
                httpGet:
                  scheme: HTTP
                  path: /
                  port: 8000
                initialDelaySeconds: 30
                timeoutSeconds: 30
              volumeMounts:
                - mountPath: /tmp
                  name: tmp-volume
              securityContext:
                allowPrivilegeEscalation: false
                readOnlyRootFilesystem: true
                runAsUser: 1001
                runAsGroup: 2001
          serviceAccountName: kubernetes-dashboard
          nodeSelector:
            "beta.kubernetes.io/os": linux
          tolerations:
            - key: node-role.kubernetes.io/master
              effect: NoSchedule
          volumes:
            - name: tmp-volume
              emptyDir: {}

After editing, apply it:

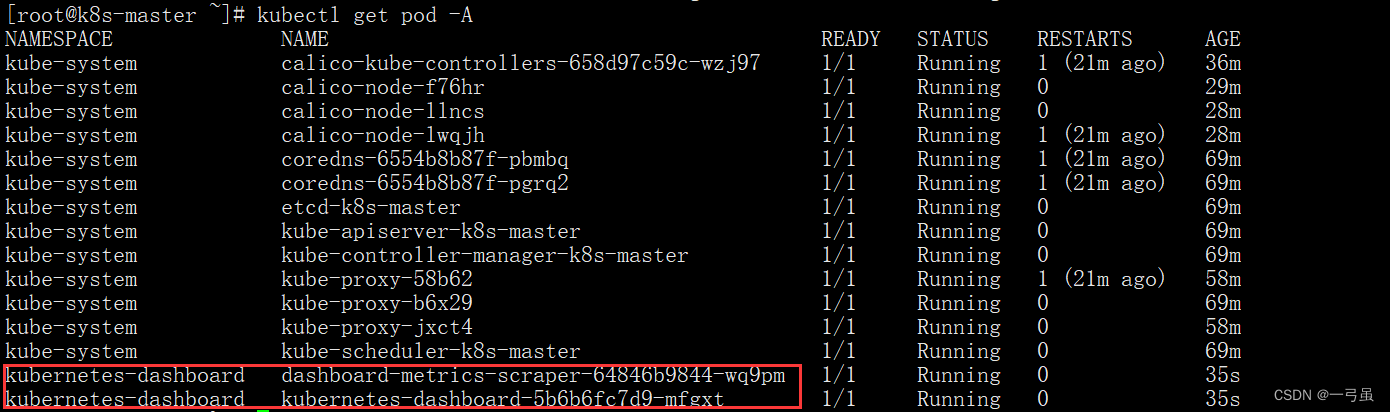

    kubectl apply -f recommended.yaml

When it finishes, list all pods.

Once these two pods have started successfully, the dashboard is deployed.

Run the following command:

    # Without a flag this lists services in the current default namespace; -A lists services in all namespaces
    kubectl get svc -A

    [root@k8s-master ~]# kubectl get svc -A
    NAMESPACE              NAME                        TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)                  AGE
    default                kubernetes                  ClusterIP   10.96.0.1       <none>        443/TCP                  70m
    kube-system            kube-dns                    ClusterIP   10.96.0.10      <none>        53/UDP,53/TCP,9153/TCP   70m
    kubernetes-dashboard   dashboard-metrics-scraper   ClusterIP   10.96.12.26     <none>        8000/TCP                 100s
    kubernetes-dashboard   kubernetes-dashboard        NodePort    10.96.157.187   <none>        443:31443/TCP            100s

As you can see, the dashboard is reachable on node port 31443, and the service port behind it is 443, which means https.

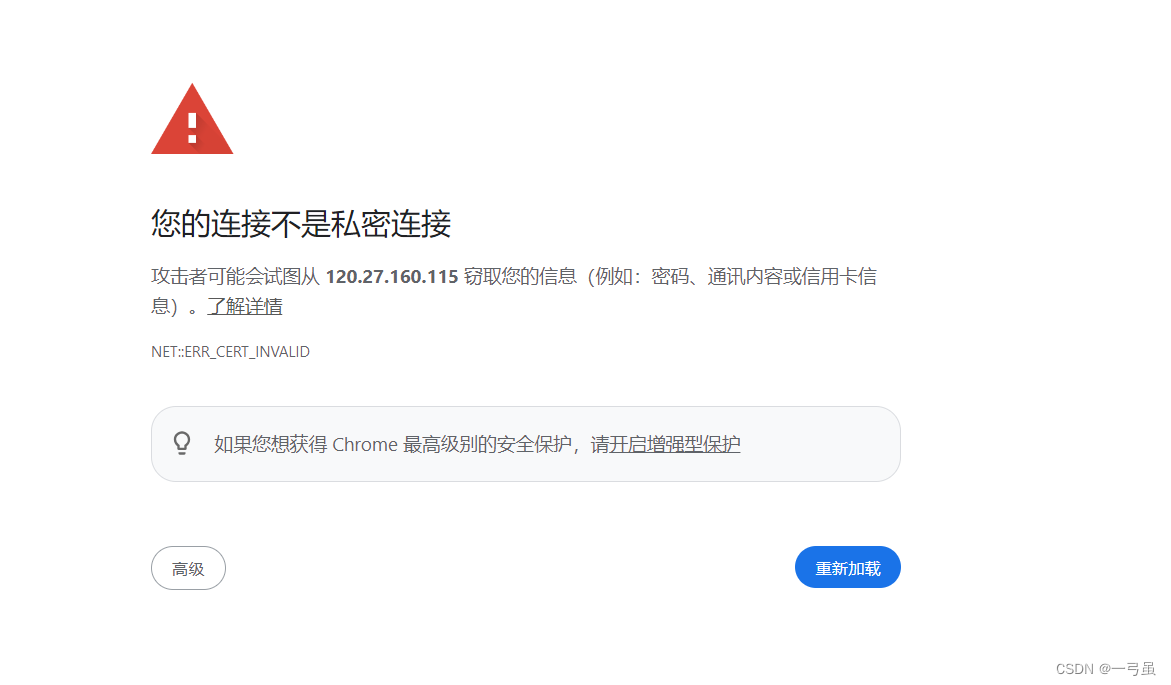

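Open a browser and visit port 31443 over https on any node of the cluster (the IP of any of the 3 servers works) to reach the dashboard.

Chrome is used for this test.

Chrome flags the https connection as not secure. On that warning page, type thisisunsafe (there is no input box, just type it) to get in.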
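
The address to open can be derived from the PORT(S) value in the service listing. A tiny sketch, with `nodeport_of` and `dashboard_url` as hypothetical helpers; on a cloud server the IP typed into the browser is presumably the node's public address with the port opened in the security group, which is an assumption and not something the steps above configure.

```shell
# nodeport_of PORTS: extract the node port from a PORT(S) value such as "443:31443/TCP".
nodeport_of() {
  local ports=${1#*:}        # drop the service port and the colon
  printf '%s\n' "${ports%%/*}"   # drop the protocol suffix
}

# dashboard_url NODE_IP PORTS: print the https URL for the dashboard on that node.
dashboard_url() {
  printf 'https://%s:%s/\n' "$1" "$(nodeport_of "$2")"
}
```

For example, `dashboard_url 192.168.0.1 443:31443/TCP` builds the URL for the master node. The port alone can also be read with `kubectl -n kubernetes-dashboard get svc kubernetes-dashboard -o jsonpath='{.spec.ports[0].nodePort}'`.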

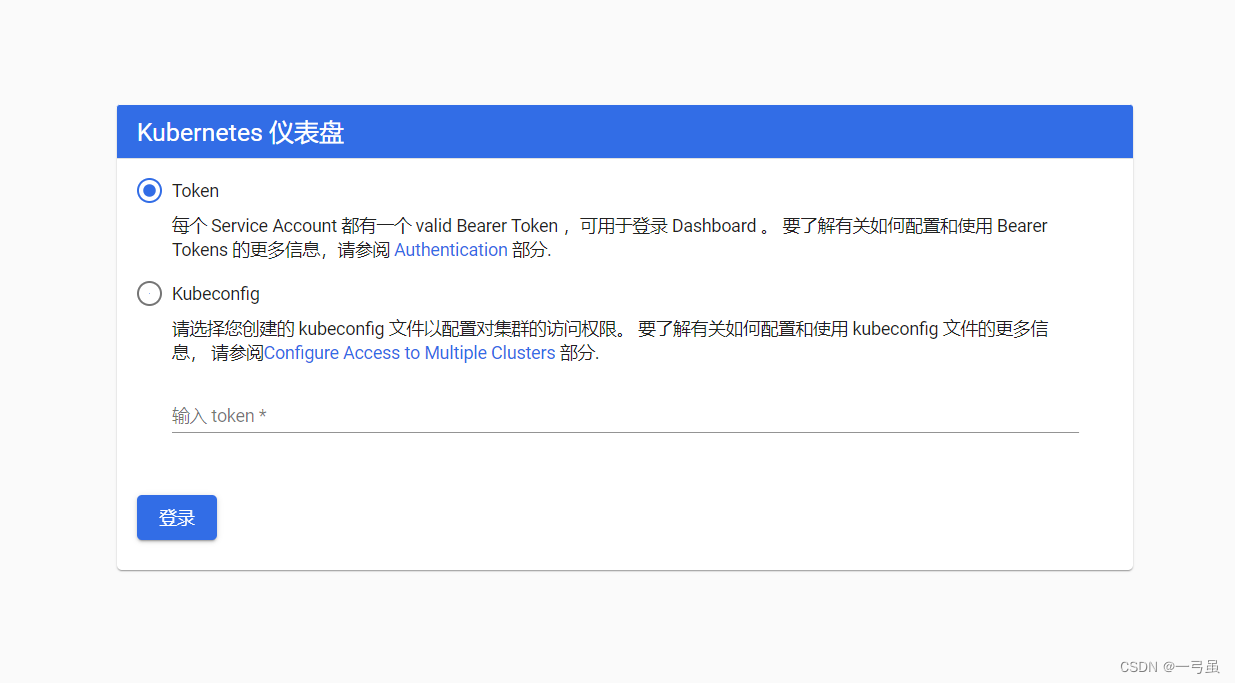

Logging in requires a token, so next we create one.

Edit the file dash-token.yaml:

    kind: ClusterRoleBinding
    apiVersion: rbac.authorization.k8s.io/v1
    metadata:
      name: admin
      annotations:
        rbac.authorization.kubernetes.io/autoupdate: "true"
    roleRef:
      kind: ClusterRole
      name: cluster-admin
      apiGroup: rbac.authorization.k8s.io
    subjects:
      - kind: ServiceAccount
        name: admin
        namespace: kubernetes-dashboard
    ---
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: admin
      namespace: kubernetes-dashboard
      labels:
        kubernetes.io/cluster-service: "true"
        addonmanager.kubernetes.io/mode: Reconcile

Apply this file and get the token:

    kubectl apply -f dash-token.yaml
    kubectl create token admin --namespace kubernetes-dashboard

    [root@k8s-master ~]# kubectl apply -f dash-token.yaml
    clusterrolebinding.rbac.authorization.k8s.io/admin created
    serviceaccount/admin created
    [root@k8s-master ~]# kubectl create token admin --namespace kubernetes-dashboard
    eyJhbGciOiJSUzI1NiIsImtpZCI6IkV6MDJ6S1NSMDlvTmJKRGc4WWJ5RUk1aW5MOUxRMUFmNFk3M1BMWmVGWTQifQ.eyJhdWQiOlsiaHR0cHM6Ly9rdWJlcm5ldGVzLmRlZmF1bHQuc3ZjLmNsdXN0ZXIubG9jYWwiXSwiZXhwIjoxNzExMjc2MzY5LCJpYXQiOjE3MTEyNzI3NjksImlzcyI6Imh0dHBzOi8va3ViZXJuZXRlcy5kZWZhdWx0LnN2Yy5jbHVzdGVyLmxvY2FsIiwia3ViZXJuZXRlcy5pbyI6eyJuYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsInNlcnZpY2VhY2NvdW50Ijp7Im5hbWUiOiJhZG1pbiIsInVpZCI6ImY4NjNhZjk5LTczMWEtNDlkZi04ODhhLWU3MDBlMGNhZWQ2OSJ9fSwibmJmIjoxNzExMjcyNzY5LCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZXJuZXRlcy1kYXNoYm9hcmQ6YWRtaW4ifQ.CwVQxLVq6fKRacovFHCQat20Xz-M1OjLCZnKM_ERHa87UchqD6aRYSoZG-oFvW2TVGLvRIwa3ViNNVMWtFMEUwy0Zzg_nM6SdqWc-fvvfWLabA_Deqi0gANlcCcUW6lLlm37iQ9nUrsfRK6LLFlow9J_wkOnB6ZmzSQcNltEbBk5SL4-Zf0goOdycLI79p8xFM26TVg5U-2eILCGBLnVAUMHpADBvkmaKSR3ix1VLFk2g6aPV89ySmt4dTwuXS--bEGW7EY1hF3-8Z_c93x9yP7p7sgdvzKYcwTmeE4ML8M3VGVoK_Nnv5-MBmyDFfDoRSaUdtJBcLiIEUXzkJuhBQ

Log in with the token.

The dashboard then shows all the information about your k8s cluster.
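
The token is a JWT, so its middle segment can be decoded to see which service account it belongs to and when it expires. In the sample above the `iat` and `exp` claims are 1711272769 and 1711276369, which is one hour apart. The sketch below assumes GNU `base64` as found on CentOS; `jwt_payload` is a hypothetical helper, and it decodes the token without verifying the signature.

```shell
# jwt_payload TOKEN: print the decoded JSON payload (second dot-separated segment) of a JWT.
jwt_payload() {
  local seg pad
  # JWTs use URL-safe base64: map "-" and "_" back to "+" and "/".
  seg=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  # Restore the "=" padding that JWTs strip, so base64 accepts the input.
  pad=$(( (4 - ${#seg} % 4) % 4 ))
  while [ "$pad" -gt 0 ]; do
    seg="${seg}="
    pad=$((pad - 1))
  done
  printf '%s' "$seg" | base64 -d
}
```

Usage: `jwt_payload "$(kubectl create token admin --namespace kubernetes-dashboard)"`. If a longer-lived token is needed, `kubectl create token` accepts a `--duration` flag, for example `--duration=24h`, subject to what the API server allows.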