Create the environment

    # Create the environment
    conda create -n demo python=3.10 -y
    # Activate the environment
    conda activate demo
    # Install torch
    conda install pytorch==2.1.2 torchvision==0.16.2 torchaudio==2.1.2 pytorch-cuda=12.1 -c pytorch -c nvidia -y
    # Install the other dependencies
    pip install transformers==4.38
    pip install sentencepiece==0.1.99
    pip install einops==0.8.0
    pip install protobuf==5.27.2
    pip install accelerate==0.33.0
    pip install streamlit==1.37.0

Code:

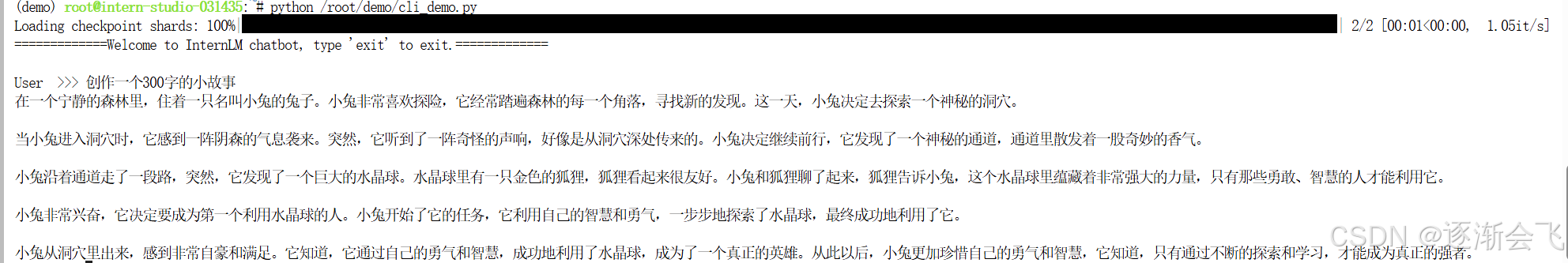
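Before running the script below, it may be worth confirming that the pinned packages from the setup step were actually installed. The following is a minimal sketch that uses only the standard library's `importlib.metadata`. The names `PINNED`, `installed_version`, and `find_mismatches` are illustrative and not part of the original tutorial, and the prefix comparison is a deliberately loose assumption: it treats the pin `4.38` as satisfied by any `4.38.x` release.

```python
from importlib.metadata import PackageNotFoundError, version

# Pins copied from the pip install commands in the setup step.
PINNED = {
    "transformers": "4.38",
    "sentencepiece": "0.1.99",
    "einops": "0.8.0",
    "protobuf": "5.27.2",
    "accelerate": "0.33.0",
    "streamlit": "1.37.0",
}


def installed_version(name):
    """Return the installed version string, or None if the package is missing."""
    try:
        return version(name)
    except PackageNotFoundError:
        return None


def find_mismatches(pinned, lookup=installed_version):
    """Return {package: (expected, found)} for every pin that is not satisfied.

    Assumption: a pin is satisfied when the installed version equals it or
    extends it with a further dotted component ("4.38" accepts "4.38.0").
    """
    mismatches = {}
    for name, expected in pinned.items():
        found = lookup(name)
        matches = found is not None and (
            found == expected or found.startswith(expected + ".")
        )
        if not matches:
            mismatches[name] = (expected, found)
    return mismatches


if __name__ == "__main__":
    problems = find_mismatches(PINNED)
    for name, (expected, found) in problems.items():
        print(f"{name}: expected {expected}, found {found}")
    if not problems:
        print("All pinned packages match.")

    # torch was installed through conda, so it is checked by importing it.
    try:
        import torch
        print("torch", torch.__version__, "| CUDA available:", torch.cuda.is_available())
    except ImportError:
        print("torch is not installed in this environment.")
```

Run it inside the activated `demo` environment. Any package listed in the output is either missing or at a different version than the one pinned above.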

    import torch
    from transformers import AutoTokenizer, AutoModelForCausalLM

    # Path to the model
    model_name_or_path = "/root/share/new_models/Shanghai_AI_Laboratory/internlm2-chat-1_8b"

    # Load the pretrained tokenizer (it runs on the CPU, so no device is specified)
    tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, trust_remote_code=True)
    # Load the pretrained model onto CUDA device 0 with bfloat16 weights
    model = AutoModelForCausalLM.from_pretrained(model_name_or_path, trust_remote_code=True, torch_dtype=torch.bfloat16, device_map='cuda:0')
    model = model.eval()  # Put the model in evaluation mode

    # System prompt used at startup
    system_prompt = """You are an AI assistant whose name is InternLM (书生·浦语).
    - InternLM (书生·浦语) is a conversational language model that is developed by Shanghai AI Laboratory (上海人工智能实验室). It is designed to be helpful, honest, and harmless.
    - InternLM (书生·浦语) can understand and communicate fluently in the language chosen by the user such as English and 中文.
    """

    messages = [(system_prompt, '')]  # Conversation history, seeded with the system prompt

    print("=============Welcome to InternLM chatbot, type 'exit' to exit.=============")

    # Main loop
    while True:
        input_text = input("\nUser >>> ")  # Read the user's input
        input_text = input_text.replace(' ', '')  # Remove all spaces from the input
        if input_text == "exit":  # Typing "exit" ends the program
            break

        length = 0
        # stream_chat yields the full response so far on each step
        for response, _ in model.stream_chat(tokenizer, input_text, messages):
            if response is not None:
                print(response[length:], flush=True, end="")  # Print only the newly generated text
                length = len(response)

Result: