阅读量:0

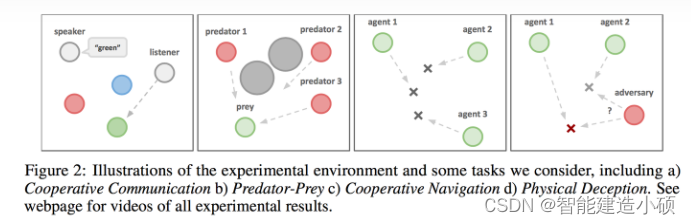

多智能体深度确定性策略梯度(Multi-Agent Deep Deterministic Policy Gradient, MADDPG)算法是一种在多智能体环境中使用的强化学习算法。这种算法是基于深度确定性策略梯度(DDPG)算法的扩展。MADDPG主要用于解决多智能体环境中的协作和竞争问题,特别是在智能体之间的交互可能非常复杂的情况下。下面将详细介绍MADDPG算法的核心概念和工作原理。

一、基础理论

在介绍MADDPG之前,需要理解其基础——DDPG算法。DDPG是一种结合了深度学习和强化学习的算法,用于连续动作空间的问题。DDPG使用了策略梯度方法和Q学习(一种值函数近似方法)的结合,通过学习一个确定性策略来解决复杂的决策问题。

MADDPG的核心思想

MADDPG考虑了多智能体环境的动态性和复杂性。在多智能体环境中,每个智能体的行为不仅取决于环境的状态,还受到其他智能体策略的影响。MADDPG通过对每个智能体采用一个独立的Actor-Critic架构,并在训练过程中考虑其他智能体的策略信息,来改善学习效果和稳定性。

算法细节

- Actor-Critic架构:每个智能体都有一个Actor网络用于输出动作,以及一个Critic网络用于评估当前策略的好坏。Actor直接学习确定性策略,而Critic负责估算状态-动作对的Q值。

- 集中式训练,分布式执行:在训练阶段,Critic网络可以访问所有智能体的信息,包括状态和动作,这允许它准确评估每个动作的期望回报。然而,在执行阶段,每个智能体的Actor网络只能基于自己的局部观察来做出决策。

- 经验回放:为了提高训练的稳定性和效率,MADDPG使用了经验回放机制。智能体的每次交互会被存储在一个回放缓冲区中,训练时会从这个缓冲区中随机抽取一批经验来更新网络。

- 目标网络:为了进一步稳定训练过程,MADDPG为每个Actor和Critic网络维护了一个目标网络。这些目标网络的参数会缓慢跟踪对应网络的参数,用于计算期望回报的稳定目标。

- 奖励和惩罚:MADDPG允许设计复杂的奖励机制,包括对合作行为的奖励和对对立行为的惩罚,来引导智能体学习如何在多种交互场景中作出最优决策。

伪代码

初始化: 对于每个智能体i: 初始化actor网络π_i和critic网络Q_i,以及它们的目标网络π'_i和Q'_i。 初始化经验回放缓冲区D。 重复(对于每个episode): 初始化环境状态S 重复(对于每个时间步): 对于每个智能体i: 根据当前策略π_i和状态S观察o_i,选择动作a_i 执行所有智能体的动作[a_1, ..., a_N],观察新状态S'和奖励R 对于每个智能体i,将转换(t = (o_i, a_i, R_i, o'_i))存储到D中 对于每个智能体i: 从D中随机采样一批转换 对于每个采样的转换: 使用目标网络计算目标Q值 更新critic网络Q_i,最小化损失:L = (Q_i(o_i, a_i) - 目标Q)^2 更新actor网络π_i,使用策略梯度 对于每个智能体i: 软更新目标网络参数:π'_i ← τπ_i + (1 - τ)π'_i Q'_i ← τQ_i + (1 - τ)Q'_i 直到环境结束本episode 重复,直到满足终止条件 与其他强化学习算法的不用点

MADDPG(多智能体深度确定性策略梯度)算法是多智能体强化学习领域的一个重要算法,它针对的是连续动作空间问题,并且特别适用于环境中存在多个智能体互动(合作、竞争或两者兼有)的情况。以下是MADDPG与其他几种多智能体强化学习算法的比较:

1. DDPG(深度确定性策略梯度)

- 相同点:MADDPG基于DDPG算法,都采用了Actor-Critic架构,利用深度学习技术处理高维状态和动作空间,并通过目标网络和经验回放提高学习稳定性。

- 不同点:DDPG是为单一智能体设计的,而MADDPG扩展了DDPG,使其适用于多智能体环境。在MADDPG中,每个智能体都有自己的Actor和Critic网络,Critic在训练时能够访问所有智能体的信息,这有助于在存在其他智能体的环境中做出更好的决策。

2. Q-Learning和DQN(深度Q网络)

- 相同点:这些算法都属于值基础的强化学习方法,通过学习一个值函数来间接地确定最优策略。

- 不同点:Q-Learning和DQN主要针对离散动作空间设计,而MADDPG处理连续动作空间。DQN通过引入深度学习来处理高维状态空间,但它适用于单一智能体。MADDPG则专门解决多智能体环境中的问题,允许智能体在训练时考虑其他智能体的策略,更适用于复杂的交互场景。

3. MARL算法中的其他方法,如VDN(值分解网络)和QMIX

- VDN和QMIX:这些算法专注于如何在多智能体设置中结合或分解智能体的值函数,以便学习到高效的协作策略。

- 相同点:MADDPG、VDN和QMIX都旨在处理多智能体环境中的问题,强调智能体间的协作或竞争。

- 不同点:VDN和QMIX采用的是值分解的方式来解决多智能体的协作问题,适用于离散动作空间。这些方法通过分解全局值函数来简化学习过程。而MADDPG采用的是策略梯度方法,并直接在连续动作空间中工作,更适合需要精确控制的应用场景。

应用场景

MADDPG适用于各种多智能体场景,包括但不限于:

- 合作控制任务,如多无人机编队飞行。

- 竞争游戏,例如多玩家在线游戏中的对抗。

- 混合动作环境,其中智能体需要同时考虑合作和竞争行为。

二、代码实现

环境介绍:simple_adversary_v3

这是一个合作与竞争的环境。

环境基本信息

- 种类:两种(友方,敌方)

- 参数:位置坐标(pos),速度(vel),传递信息(c)。 (有个参数叫

agent.silent,等于True就是没有信息传递(保持安静)) - 环境中的实体(landmark)

- 参数:位置坐标,速度(有的环境地表会移动,但这个环境都是静止的)

智能体观测信息:(observation)

if agent.adversary == True:一个numpy.array,[地标距离自己的距离(4),其他智能体距自己的距离(4)]if agent.adversary == False:一个numpy.array,[智能体自己的坐标(2),智能体距目标的相对距离(2),地标距离自己的距离(2),其他智能体距自己的距离(4)]

奖励函数

- bad:自己距离目标的距离。

- good:可以由两个因素决定,友方距离目标的距离,越近越好;敌方距离目标的距离,越远越好。

main.py

""" #!/usr/bin/env python # -*- coding:utf-8 -*- @Project : MADDPG """ from pettingzoo.mpe import simple_adversary_v3 import numpy as np import torch import torch.nn as nn import os import time from maddpg_agent import Agent torch.autograd.set_detect_anomaly(True) device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu") print(f"Using device:{device}") def multi_obs_to_state(multi_obs): state= np.array([]) for agent_obs in multi_obs.values(): state = np.concatenate([state, agent_obs]) return state NUM_EPISODE = 1000 NUM_STEP = 100 MEMORY_SIZE = 10000 BATCH_SIZE = 512 TARGET_UPDATE_INTERVAL = 200 LR_ACTOR = 0.001 LR_CRITIC = 0.001 HIDDEN_DIM = 64 GAMMA = 0.99 TAU = 0.01 scenario = "simple_adversary_v3" current_path = os.path.dirname(os.path.realpath(__file__)) agent_path = current_path + "\\" +"models"+ "\\" + scenario + "\\" timestamp = time.strftime("%Y%m%d%H%M%S") # 1. initialize the agent # 初始化环境 env = simple_adversary_v3.parallel_env(N=2, max_cycles= NUM_STEP, continuous_actions= True) multi_obs, infos = env.reset() NUM_AGENT = env.num_agents agent_name_list = env.agents # 1.1 get obs_dim obs_dim = [] for agent_obs in multi_obs.values(): obs_dim.append(agent_obs.shape[0]) state_dim = sum(obs_dim) # 1.2 get action_dim action_dim = [] for agent_name in agent_name_list: action_dim.append(env.action_space(agent_name).sample().shape[0]) agents=[] # 实例化多个智能体 for agent_i in range(NUM_AGENT): print(f"Initializing agent {agent_i}.....") agent = Agent( memo_size=MEMORY_SIZE, obs_dim=obs_dim[agent_i], state_dim= state_dim, n_agent = NUM_AGENT, action_dim = action_dim[agent_i], alpha=LR_ACTOR ,beta= LR_CRITIC, fc1_dims = HIDDEN_DIM, fc2_dims=HIDDEN_DIM, gamma = GAMMA, tau=TAU , batch_size=BATCH_SIZE) agents.append(agent) # 2. Main training loop for episode_i in range(NUM_EPISODE): multi_obs, infos = env.reset() episode_reward = 0 mlti_done = {agent_name:False for agent_name in agent_name_list} for step_i in range(NUM_STEP): total_step = episode_i*NUM_STEP+step_i # 2.1 collecting action from all agents multi_actions ={} # 用于存储动作集合 for agent_i, agent_name in enumerate(agent_name_list): agent = agents[agent_i] single_obs = multi_obs[agent_name] single_action = agent.get_action(single_obs) multi_actions[agent_name] = single_action # 2.2 executing actions, multi_next_obs, multi_reward, multi_done, multi_truncations, infos = env.step(multi_actions) state= multi_obs_to_state(multi_obs) next_state = multi_obs_to_state(multi_next_obs) if step_i >= NUM_STEP -1: multi_done = {agent_name: True for agent_name in agent_name_list} #2.3 store memory for agent_i, agent_name in enumerate(agent_name_list): agent = agents[agent_i] single_obs = multi_obs[agent_name] single_next_obs = multi_next_obs[agent_name] single_action = multi_actions[agent_name] single_reward = multi_reward[agent_name] single_done = multi_done[agent_name] # 存储到经验池中 agent.replay_buffer.add_memo(single_obs,single_next_obs,state, next_state, single_action,single_reward,single_done) #2.4 Update brain every fixed step multi_batch_obses=[] multi_batch_next_obses =[] multi_batch_states = [] multi_batch_next_states = [] multi_batch_actions = [] multi_batch_next_actions =[] multi_batch_online_actions =[] multi_batch_rewards =[] multi_batch_dones = [] #2.4.1 sample a batch of memories current_memo_size = min (MEMORY_SIZE, total_step+1) if current_memo_size < BATCH_SIZE: batch_idx = range(0, current_memo_size) else: batch_idx = np.random.choice(current_memo_size,BATCH_SIZE) for agent_i in range(NUM_AGENT): agent = agents[agent_i] batch_obses, batch_next_obses, batch_states,batch_next_state, batch_actions,batch_rewards, batch_dones = agent.replay_buffer.sample(batch_idx) batch_obses_tensor = torch.tensor(batch_obses,dtype=torch.float).to(device) batch_next_obses_tensor = torch.tensor(batch_next_obses,dtype=torch.float).to(device) batch_states_tensor = torch.tensor(batch_states,dtype=torch.float).to(device) batch_next_state_tensor = torch.tensor(batch_next_state,dtype=torch.float).to(device) batch_actions_tensor = torch.tensor(batch_actions,dtype=torch.float).to(device) batch_rewards_tensor = torch.tensor(batch_rewards,dtype=torch.float).to(device) batch_done_tensor = torch.tensor(batch_dones,dtype=torch.float).to(device) multi_batch_obses.append(batch_obses_tensor) multi_batch_next_obses.append(batch_next_obses_tensor) multi_batch_states.append(batch_states_tensor) multi_batch_next_states.append(batch_next_state_tensor) multi_batch_actions.append(batch_actions_tensor) single_batch_next_actions = agent.target_actor.forward(batch_next_obses_tensor) multi_batch_next_actions.append(single_batch_next_actions) single_batch_online_action = agent.actor.forward(batch_obses_tensor) multi_batch_online_actions.append(single_batch_online_action) multi_batch_rewards.append(batch_rewards_tensor) multi_batch_dones.append(batch_done_tensor) multi_batch_actions_tensor = torch.cat(multi_batch_actions, dim=1).to(device) multi_batch_next_actions_tensor = torch.cat(multi_batch_next_actions, dim=1).to(device) multi_batch_online_actions_tensor = torch.cat(multi_batch_online_actions, dim=1).to(device) if(total_step+1) % TARGET_UPDATE_INTERVAL == 0: for agent_i in range(NUM_AGENT): agent = agents[agent_i] batch_obses_tensor = multi_batch_obses[agent_i] batch_states_tensor = multi_batch_states[agent_i] batch_next_states_tensor = multi_batch_next_states[agent_i] batch_rewards_tensor =multi_batch_rewards [agent_i] batch_dones_tensor =multi_batch_dones [agent_i] batch_actions_tensor =multi_batch_actions [agent_i] #target critic critic_target_q = agent.target_critic.forward(batch_next_state_tensor ,multi_batch_next_actions_tensor.detach()) y = (batch_rewards_tensor + (1-batch_dones_tensor)*agent.gamma*critic_target_q).flatten() critic_q = agent.critic.forward(batch_states_tensor,multi_batch_actions_tensor.detach()).flatten() #update critic critic_loss = nn.MSELoss()(y,critic_q) agent.critic.optimizer.zero_grad() critic_loss.backward() agent.critic.optimizer.step() #update actor actor_q = agent.critic.forward(batch_states_tensor, multi_batch_online_actions_tensor.detach()).flatten() actor_loss = -torch.mean(actor_q) agent.actor.optimizer.zero_grad() actor_loss.backward() agent.actor.optimizer.step() # update target critic for target_param, param in zip(agent.target_critic.parameters(), agent.critic.parameters()): target_param.data.copy_(agent.tau * param.data+(1.0-agent.tau)*target_param.data) # update target actor for target_param, param in zip(agent.target_actor.parameters(), agent.actor.parameters()): target_param.data.copy_(agent.tau * param.data+(1.0-agent.tau)*target_param.data) multi_obs = multi_next_obs episode_reward += sum([single_reward for single_reward in multi_reward.values()]) print(f"episode reward :{episode_reward}") # 3.Render the env if(episode_i +1) % 50 == 0: env= simple_adversary_v3.parallel_env(N=2, max_cycles=NUM_STEP, continuous_actions = True, render_mode = "human") for test_epi_i in range(2): multi_obs, infos = env.reset() for step_i in range(NUM_STEP): multi_actions={} for agent_i, agent_name in enumerate(agent_name_list): agent = agents[agent_i] single_obs = multi_obs[agent_name] single_action = agent.get_action(single_obs) multi_actions[agent_name] = single_action multi_next_obs, multi_reward, multi_done, multi_truncations, infos = env.step(multi_actions) multi_obs = multi_next_obs # Save the agents if episode_i == 0: highest_reward = episode_reward if episode_reward >highest_reward: highest_reward=episode_reward print(f"Highest reward update at episode {episode_i}:{round(highest_reward,2)}") for agent_i in range(NUM_AGENT): agent = agents[agent_i] flag = os.path.exists(agent_path) if not flag: os.makedirs(agent_path) torch.save(agent.actor.state_dict(),f"models"+"\\"+"simple_adversary_v3"+"\\"+f"agent_{agent_i}_actor_{scenario}_{timestamp}.pth") env.close() maddpg_agent.py

""" #!/usr/bin/env python # -*- coding:utf-8 -*- @Project : MADDPG """ import numpy as np import torch import torch.nn as nn import torch.nn.functional as F device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu") class ReplayBuffer: def __init__(self, capcity, obs_dim, state_dim, action_dim, batch_size): self.capcity = capcity self.obs_cap = np.empty((self.capcity,obs_dim)) self.next_obs_cap = np.empty((self.capcity,obs_dim)) self.state_cap = np.empty((self.capcity,state_dim)) self.next_state_cap = np.empty((self.capcity,state_dim)) self.action_cap = np.empty((self.capcity,action_dim)) self.reward_cap = np.empty((self.capcity,1)) self.done_cap = np.empty((self.capcity,1)) self.batch_batch = batch_size self.current = 0 def add_memo(self, obs, next_obs, state, next_state, action, reward, done): self.obs_cap[self.current] =obs self.next_obs_cap[self.current] =next_obs self.state_cap[self.current] =state self.next_state_cap[self.current] =next_state self.action_cap[self.current] =action self.reward_cap[self.current] =reward self.done_cap[self.current] =done self.current = (self.current + 1) % self.capcity #get one sample def sample(self,idxes): obs = self.obs_cap[idxes] next_obs = self.next_obs_cap[idxes] state = self.state_cap[idxes] next_state = self.next_state_cap[idxes] action = self.action_cap[idxes] reward = self.reward_cap[idxes] done = self.done_cap[idxes] return obs,next_obs,state,next_state,action,reward,done class Critic(nn.Module): def __init__(self, lr_critic, input_dims, fc1_dims, fc2_dims,n_agent,action_dim): super(Critic, self).__init__() self.fc1 = nn.Linear(input_dims+n_agent*action_dim,fc1_dims) self.fc2 = nn.Linear(fc1_dims,fc2_dims) self.q = nn.Linear(fc2_dims, 1) self.optimizer = torch.optim.Adam(self.parameters(),lr=lr_critic) def forward(self, state,action): x= torch.cat([state,action],dim=1) x = F.relu(self.fc1(x)) x = F.relu(self.fc2(x)) q = self.q(x) return q def save_checkpoint(self, checkpoint_file): torch.save(self.state_dict(), checkpoint_file) def load_checkpoint(self, checkpoint_file): self.load_state_dict(torch.load(checkpoint_file)) class Actor(nn.Module): def __init__(self, lr_actor, input_dims, fc1_dims, fc2_dims,action_dim): super(Actor, self).__init__() self.fc1 = nn.Linear(input_dims,fc1_dims) self.fc2 = nn.Linear(fc1_dims,fc2_dims) self.pi = nn.Linear(fc2_dims, action_dim) self.optimizer = torch.optim.Adam(self.parameters(),lr=lr_actor) def forward(self, state): x = F.relu((self.fc1(state))) x = F.relu((self.fc2(x))) mu = torch.softmax(self.pi(x), dim=1) return mu def save_checkpoint(self, checkpoint_file): torch.save(self.state_dict(), checkpoint_file) def load_checkpoint(self, checkpoint_file): self.load_state_dict(torch.load(checkpoint_file)) class Agent: def __init__(self, memo_size, obs_dim, state_dim, n_agent, action_dim, alpha ,beta, fc1_dims, fc2_dims, gamma, tau , batch_size): self.gamma = gamma self.tau = tau self.action_dim = action_dim self.actor = Actor(lr_actor=alpha, input_dims=obs_dim, fc1_dims=fc1_dims, fc2_dims=fc2_dims, action_dim=action_dim).to(device) self.critic = Critic(lr_critic=beta, input_dims=state_dim, fc1_dims=fc1_dims, fc2_dims=fc2_dims, n_agent=n_agent,action_dim=action_dim).to(device) self.target_actor = Actor(lr_actor=alpha, input_dims=obs_dim, fc1_dims=fc1_dims, fc2_dims=fc2_dims, action_dim=action_dim).to(device) self.target_critic = Critic(lr_critic=beta, input_dims=state_dim, fc1_dims=fc1_dims, fc2_dims=fc2_dims, n_agent=n_agent, action_dim=action_dim).to(device) self.replay_buffer = ReplayBuffer(capcity=memo_size, obs_dim=obs_dim, state_dim=state_dim, action_dim=action_dim, batch_size=batch_size) def get_action(self, obs): single_obs = torch.tensor(data=obs, dtype=torch.float).unsqueeze(0).to(device) single_action = self.actor.forward(single_obs) noise = torch.randn(self.action_dim).to(device)*0.2 single_action = torch.clamp(input=single_action+noise, min=0.0, max=1.0) return single_action.detach().cpu().numpy()[0] def save_model(self,filename): self.actor.save_checkpoint(filename) self.target_actor.save_checkpoint(filename) self.critic.save_checkpoint(filename) self.target_critic.save_checkpoint(filename) def load_model(self,filename): self.actor.load_checkpoint(filename) self.target_actor.load_checkpoint(filename) self.critic.load_checkpoint(filename) self.target_critic.load_checkpoint(filename)