Theory lesson: C2W3. Auto-complete and Language Models


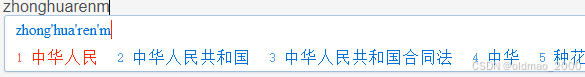

Auto-correct was covered earlier; this section covers auto-complete.

Browsers, of course, offer a similar feature.

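A language model is a key component of an auto-complete system.

A language model assigns probabilities to word sequences, so that more "likely" sequences receive higher scores. For example, "I have a pen" has a higher probability than "I am a pen".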

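These probabilities can be used to build an auto-complete system.

Suppose the user types "I eat scrambled". You can then look for the word x that gives "I eat scrambled x" the highest probability. If x = "eggs", the completed sentence is "I eat scrambled eggs".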
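The idea of picking the word x with the highest probability can be sketched with plain bigram counts. This is only an illustration under stated assumptions: the tiny corpus and the helper name `suggest_next_word` are made up here, only the last typed word is used as context, and no smoothing is applied.

```python
from collections import Counter, defaultdict

# Toy corpus; these sentences are invented for illustration only.
corpus = [
    "i eat scrambled eggs",
    "i eat scrambled eggs daily",
    "i eat scrambled tofu",
    "i have a pen",
]

# For every word, count which words follow it (bigram counts).
next_word_counts = defaultdict(Counter)
for sentence in corpus:
    tokens = sentence.split()
    for prev, nxt in zip(tokens, tokens[1:]):
        next_word_counts[prev][nxt] += 1


def suggest_next_word(text):
    """Return (word, probability) for the most likely next word, or None.

    Hypothetical helper: conditions only on the last word of `text`.
    """
    tokens = text.lower().split()
    if not tokens:
        return None
    candidates = next_word_counts.get(tokens[-1])
    if not candidates:
        return None  # the last word was never seen with a successor
    total = sum(candidates.values())
    # P(x | last word) = count(last word, x) / count(last word, any word)
    best_word, best_count = candidates.most_common(1)[0]
    return best_word, best_count / total


print(suggest_next_word("I eat scrambled"))
```

In this toy corpus "scrambled" is followed by "eggs" twice and "tofu" once, so "eggs" is suggested with an estimated probability of 2/3.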

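There are many language models to choose from; here we go straight to N-grams, which are simple and efficient. The rough steps are:

- Load and preprocess the data
  - Load and tokenize the data.
  - Split the sentences into a training set and a test set.
  - Replace low-frequency words with the unknown token `<unk>`.
- Develop an N-gram based language model
  - Count the n-grams in a given dataset.
  - Estimate the conditional probability of the next word with k-smoothing.
- Evaluate the N-gram model by computing its perplexity score.
- Use the N-gram model to suggest the next word for a sentence.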
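For reference, the two quantities named in the steps above are usually written as follows. The notation is an assumption chosen here, not taken from the original post: $C(\cdot)$ is an n-gram count in the training data, $k$ is the smoothing constant, $|V|$ is the vocabulary size, and $M$ is the number of tokens in the evaluated sequence $W$.

$$
P(w_n \mid w_{n-N+1}^{n-1}) = \frac{C(w_{n-N+1}^{n}) + k}{C(w_{n-N+1}^{n-1}) + k\,|V|}
$$

$$
PP(W) = \left( \prod_{i=1}^{M} \frac{1}{P(w_i \mid w_{i-N+1}^{i-1})} \right)^{1/M}
$$

A lower perplexity means the model assigns higher probability to the test text.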

```python
import math
import random
import numpy as np
import pandas as pd
import nltk
#nltk.download('punkt')
import w3_unittest
nltk.data.path.append('.')
```

1 Load and Preprocess Data

1.1 Load the data

The data is one long string containing a large number of tweets, with a line break "\n" between tweets.

```python
with open("./data/en_US.twitter.txt", "r", encoding="utf-8") as f:
    data = f.read()
print("Data type:", type(data))
print("Number of letters:", len(data))
print("First 300 letters of the data")
print("-------")
display(data[0:300])
print("-------")

print("Last 300 letters of the data")
print("-------")
display(data[-300:])
print("-------")
```

Output:

```
Data type: <class 'str'>
Number of letters: 3335477
First 300 letters of the data
-------
"How are you? Btw thanks for the RT. You gonna be in DC anytime soon? Love to see you. Been way, way too long.\nWhen you meet someone special... you'll know. Your heart will beat more rapidly and you'll smile for no reason.\nthey've decided its more fun if I don't.\nSo Tired D; Played Lazer Tag & Ran A "
-------
Last 300 letters of the data
-------
"ust had one a few weeks back....hopefully we will be back soon! wish you the best yo\nColombia is with an 'o'...“: We now ship to 4 countries in South America (fist pump). Please welcome Columbia to the Stunner Family”\n#GutsiestMovesYouCanMake Giving a cat a bath.\nCoffee after 5 was a TERRIBLE idea.\n"
-------
```

1.2 Pre-process the data

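Preprocess the data with the following steps:

- Split the data into sentences, using "\n" as the delimiter.
- Split each sentence into tokens. Note that "token" and "word" are used interchangeably here.
- Assign the sentences to the training set or the test set.
- Find the tokens that appear at least N times in the training data.
- Replace the tokens that appear fewer than N times with `<unk>`.

Note: this lab omits validation data.

- In a real application, part of the data should be held out as a validation set and used to tune training.
- For simplicity, that step is skipped here.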
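For readers who do want a validation set, a three-way split could look roughly like the sketch below. The function name `train_val_test_split`, the 80/10/10 fractions, and the toy data are assumptions made for illustration; the lab itself only splits into training and test data.

```python
import random


def train_val_test_split(sentences, train_frac=0.8, val_frac=0.1, seed=87):
    """Shuffle a copy of `sentences` and split it into train/validation/test lists.

    Hypothetical helper; the fractions are illustrative defaults.
    """
    shuffled = list(sentences)  # copy, so the caller's list is not mutated
    random.Random(seed).shuffle(shuffled)
    train_end = int(len(shuffled) * train_frac)
    val_end = train_end + int(len(shuffled) * val_frac)
    return shuffled[:train_end], shuffled[train_end:val_end], shuffled[val_end:]


# Stand-in for the tokenized tweets: ten dummy "sentences".
toy_sentences = [["sentence", str(i)] for i in range(10)]
train, val, test = train_val_test_split(toy_sentences)
print(len(train), len(val), len(test))
```

Using a private `random.Random(seed)` instance keeps the split reproducible without touching the global random state.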

Exercise 01. Split data into sentences

```python
# UNIT TEST COMMENT: Candidate for Table Driven Tests
### UNQ_C1 GRADED_FUNCTION: split_to_sentences ###
def split_to_sentences(data):
    """
    Split data by linebreak "\n"

    Args:
        data: str

    Returns:
        A list of sentences
    """
    ### START CODE HERE ###
    sentences = data.split("\n")
    ### END CODE HERE ###

    # Additional cleaning (This part is already implemented)
    # - Remove leading and trailing spaces from each sentence
    # - Drop sentences if they are empty strings.
    sentences = [s.strip() for s in sentences]
    sentences = [s for s in sentences if len(s) > 0]

    return sentences
```

Run:

```python
# test your code
x = """
I have a pen.\nI have an apple. \nAh\nApple pen.\n
"""
print(x)

split_to_sentences(x)
```

Output:

```
I have a pen.
I have an apple.
Ah
Apple pen.
```

PS: no audio is included here.

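Exercise 02. Tokenize sentences

Convert all tokens to lowercase, so that words capitalized in the original text (for example, at the start of a sentence) are treated the same as their lowercase forms. Append each list of words to the list of sentences.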
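To see why lowercasing matters, the sketch below counts tokens with and without it. It deliberately uses a small regular-expression tokenizer (`simple_tokenize`, a hypothetical stand-in) rather than `nltk.word_tokenize`, so it runs without downloading NLTK data; its output will not match NLTK's on all inputs.

```python
import re
from collections import Counter

# Matches runs of word characters, or a single punctuation mark.
TOKEN_PATTERN = r"\w+|[^\w\s]"


def simple_tokenize(sentence):
    """Lowercase the sentence, then split it into words and punctuation marks."""
    return re.findall(TOKEN_PATTERN, sentence.lower())


sentences = ["The sky is blue.", "I like the sky."]

# Without lowercasing, "The" and "the" are counted as different tokens.
cased = Counter(tok for s in sentences for tok in re.findall(TOKEN_PATTERN, s))
# With lowercasing, both occurrences fall under the single entry "the".
lowered = Counter(tok for s in sentences for tok in simple_tokenize(s))

print(cased["The"], cased["the"])
print(lowered["the"])
```

Merging the two spellings gives each word a larger, more reliable count, which matters once low-frequency words are replaced by an unknown token.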

```python
# UNIT TEST COMMENT: Candidate for Table Driven Tests
### UNQ_C2 GRADED_FUNCTION: tokenize_sentences ###
def tokenize_sentences(sentences):
    """
    Tokenize sentences into tokens (words)

    Args:
        sentences: List of strings

    Returns:
        List of lists of tokens
    """
    # Initialize the list of lists of tokenized sentences
    tokenized_sentences = []
    ### START CODE HERE ###

    # Go through each sentence
    for sentence in sentences:
        # Convert to lowercase letters
        sentence = sentence.lower()

        # Convert into a list of words
        tokenized = nltk.word_tokenize(sentence)

        # Append the list of words to the list of lists
        tokenized_sentences.append(tokenized)

    ### END CODE HERE ###

    return tokenized_sentences
```

Run:

```python
# test your code
sentences = ["Sky is blue.", "Leaves are green.", "Roses are red."]
tokenize_sentences(sentences)
```

Output:

```
[['sky', 'is', 'blue', '.'],
 ['leaves', 'are', 'green', '.'],
 ['roses', 'are', 'red', '.']]
```

Exercise 03

Combine the two previous exercises to obtain the tokenized data.

```python
# UNIT TEST COMMENT: Candidate for Table Driven Tests
### UNQ_C3 GRADED_FUNCTION: get_tokenized_data ###
def get_tokenized_data(data):
    """
    Make a list of tokenized sentences

    Args:
        data: String

    Returns:
        List of lists of tokens
    """
    ### START CODE HERE ###

    # Get the sentences by splitting up the data
    sentences = split_to_sentences(data)

    # Get the list of lists of tokens by tokenizing the sentences
    tokenized_sentences = tokenize_sentences(sentences)

    ### END CODE HERE ###

    return tokenized_sentences
```

Test:

```python
# test your function
x = "Sky is blue.\nLeaves are green\nRoses are red."
get_tokenized_data(x)
```

Output:

```
[['sky', 'is', 'blue', '.'],
 ['leaves', 'are', 'green'],
 ['roses', 'are', 'red', '.']]
```

Split into train and test sets

```python
tokenized_data = get_tokenized_data(data)
random.seed(87)
random.shuffle(tokenized_data)

train_size = int(len(tokenized_data) * 0.8)
train_data = tokenized_data[0:train_size]
test_data = tokenized_data[train_size:]
```

Run:

```python
print("{} data are split into {} train and {} test set".format(
    len(tokenized_data), len(train_data), len(test_data)))

print("First training sample:")
print(train_data[0])

print("First test sample")
print(test_data[0])
```

Output:

```
47961 data are split into 38368 train and 9593 test set
First training sample:
['i', 'personally', 'would', 'like', 'as', 'our', 'official', 'glove', 'of', 'the', 'team', 'local', 'company', 'and', 'quality', 'production']
First test sample
['that', 'picture', 'i', 'just', 'seen', 'whoa', 'dere', '!', '!', '>', '>', '>', '>', '>', '>', '>']
```

Exercise 04

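Training does not use every token (word) that appears in the data, only the more frequent ones.

- Focus only on words that appear at least N times in the data.
- First count how many times each word appears in the data.

This needs a double for loop: one over the sentences, and one over the tokens within each sentence.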
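As an aside, the same counts can be obtained from the standard library without writing the two loops by hand. This is a sketch of an alternative, not the exercise's expected solution; the name `count_words_with_counter` is made up here.

```python
from collections import Counter
from itertools import chain


def count_words_with_counter(tokenized_sentences):
    """Map each token to its number of occurrences across all sentences."""
    # chain.from_iterable flattens the list of lists into one stream of tokens,
    # which plays the role of the nested loop.
    return dict(Counter(chain.from_iterable(tokenized_sentences)))


toy_sentences = [
    ["sky", "is", "blue", "."],
    ["leaves", "are", "green", "."],
    ["roses", "are", "red", "."],
]
counts = count_words_with_counter(toy_sentences)
print(counts["."], counts["are"], counts["sky"])
```

The explicit double loop makes the counting logic visible, which is why the exercise asks for it.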

```python
# UNIT TEST COMMENT: Candidate for Table Driven Tests
### UNQ_C4 GRADED_FUNCTION: count_words ###
def count_words(tokenized_sentences):
    """
    Count the number of word appearances in the tokenized sentences

    Args:
        tokenized_sentences: List of lists of strings

    Returns:
        dict that maps word (str) to the frequency (int)
    """
    word_counts = {}
    ### START CODE HERE ###

    # Loop through each sentence
    for sentence in tokenized_sentences:

        # Go through each token in the sentence
        for token in sentence:

            # If the token is not in the dictionary yet, set the count to 1
            if token not in word_counts:
                word_counts[token] = 1

            # If the token is already in the dictionary, increment the count by 1
            else:
                word_counts[token] += 1

    ### END CODE HERE ###

    return word_counts
```

Test:

```python
# test your code
tokenized_sentences = [['sky', 'is', 'blue', '.'],
                       ['leaves', 'are', 'green', '.'],
                       ['roses', 'are', 'red', '.']]
count_words(tokenized_sentences)
```

Output:

```
{'sky': 1,
 'is': 1,
 'blue': 1,
 '.': 3,
 'leaves': 1,
 'are': 2,
 'green': 1,
 'roses': 1,
 'red': 1}
```

Handling 'Out of Vocabulary' words

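When the model meets a word it never saw during training, it has no counts for that word and therefore nothing on which to base a suggestion, so it cannot predict the next word.

- Such "new" words are called unknown words, or out-of-vocabulary (OOV) words.
- The percentage of unknown words in the test set is called the OOV rate.

To handle unknown words at prediction time, use a special token, `<unk>`, to represent all of them.

Modify the training data so that it contains some "unknown" words to train on:

- Convert the words that occur infrequently in the training set into "unknown" words.
- Build a list of the most frequent words in the training set, called the closed vocabulary.
- Convert every word that is not in the closed vocabulary to the `<unk>` token.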
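The OOV rate defined above can be measured with a few lines. The sketch below assumes a token-level definition (the share of test tokens that are missing from the vocabulary); the helper name `oov_rate` and the toy data are illustrative and not part of the lab.

```python
def oov_rate(test_sentences, vocabulary):
    """Return the fraction of test tokens that are not in the vocabulary."""
    vocab = set(vocabulary)  # set membership checks are fast
    tokens = [tok for sentence in test_sentences for tok in sentence]
    if not tokens:
        return 0.0  # avoid dividing by zero on empty input
    unknown = sum(1 for tok in tokens if tok not in vocab)
    return unknown / len(tokens)


toy_vocab = ["sky", "is", "blue", ".", "leaves", "are", "green"]
toy_test = [["roses", "are", "red", "."]]
print(oov_rate(toy_test, toy_vocab))
```

Here "roses" and "red" are outside the vocabulary, so two of the four test tokens are unknown and the rate is 0.5.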

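Exercise 05

Create a function that takes a text document and a threshold `count_threshold`.

Any word whose count is greater than or equal to `count_threshold` is kept in the closed vocabulary.

The function returns the closed vocabulary of words.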

```python
# UNIT TEST COMMENT: Candidate for Table Driven Tests
### UNQ_C5 GRADED_FUNCTION: get_words_with_nplus_frequency ###
def get_words_with_nplus_frequency(tokenized_sentences, count_threshold):
    """
    Find the words that appear N times or more

    Args:
        tokenized_sentences: List of lists of strings
        count_threshold: minimum number of occurrences for a word to be in the closed vocabulary.

    Returns:
        List of words that appear N times or more
    """
    # Initialize an empty list to contain the words that
    # appear at least 'count_threshold' times.
    closed_vocab = []

    # Get the word counts of the tokenized sentences
    # Use the function that you defined earlier to count the words
    word_counts = count_words(tokenized_sentences)

    ### START CODE HERE ###
    # UNIT TEST COMMENT: Whole thing can be one-lined with list comprehension

    # For each word and its count
    for word, cnt in word_counts.items():

        # Check that the word's count
        # is at least as great as the minimum count
        if cnt >= count_threshold:

            # Append the word to the list
            closed_vocab.append(word)
    ### END CODE HERE ###

    return closed_vocab
```

Test:

```python
# test your code
tokenized_sentences = [['sky', 'is', 'blue', '.'],
                       ['leaves', 'are', 'green', '.'],
                       ['roses', 'are', 'red', '.']]
tmp_closed_vocab = get_words_with_nplus_frequency(tokenized_sentences, count_threshold=2)
print("Closed vocabulary:")
print(tmp_closed_vocab)
```

Output:

```
Closed vocabulary:
['.', 'are']
```
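The comment in the function above notes that the filtering can be written as a single list comprehension. A self-contained sketch of that variant follows; the name `closed_vocab_one_liner` is made up, and it counts with `collections.Counter` rather than calling `count_words`, so that it runs on its own.

```python
from collections import Counter


def closed_vocab_one_liner(tokenized_sentences, count_threshold):
    """Return the words that occur at least `count_threshold` times."""
    word_counts = Counter(tok for sentence in tokenized_sentences for tok in sentence)
    # Keep a word only if its count reaches the threshold.
    return [word for word, cnt in word_counts.items() if cnt >= count_threshold]


toy_sentences = [
    ["sky", "is", "blue", "."],
    ["leaves", "are", "green", "."],
    ["roses", "are", "red", "."],
]
print(closed_vocab_one_liner(toy_sentences, count_threshold=2))
```

Because `Counter` keeps keys in first-seen order, the words come back in the same order as in the loop-based version.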

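Exercise 06

Words that appear `count_threshold` times or more belong to the closed vocabulary.

All other words are treated as unknown.

Replace the words that are not in the closed vocabulary with the token `<unk>`.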
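The replacement rule is compact enough to express as a nested list comprehension. The sketch below is one possible formulation under that assumption, with a made-up name (`replace_oov_compact`); it is not the exercise's graded function.

```python
def replace_oov_compact(tokenized_sentences, vocabulary, unknown_token="<unk>"):
    """Return new sentences in which out-of-vocabulary tokens are replaced."""
    vocab = set(vocabulary)  # a set makes each membership test fast
    return [
        [tok if tok in vocab else unknown_token for tok in sentence]
        for sentence in tokenized_sentences
    ]


toy_sentences = [["dogs", "run"], ["cats", "sleep"]]
toy_vocab = ["dogs", "sleep"]
print(replace_oov_compact(toy_sentences, toy_vocab))
```

The input lists are left untouched; a new list of lists is returned.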

```python
# UNIT TEST COMMENT: Candidate for Table Driven Tests
### UNQ_C6 GRADED_FUNCTION: replace_oov_words_by_unk ###
def replace_oov_words_by_unk(tokenized_sentences, vocabulary, unknown_token="<unk>"):
    """
    Replace words not in the given vocabulary with '<unk>' token.

    Args:
        tokenized_sentences: List of lists of strings
        vocabulary: List of strings that we will use
        unknown_token: A string representing unknown (out-of-vocabulary) words

    Returns:
        List of lists of strings, with words not in the vocabulary replaced
    """
    # Place vocabulary into a set for faster search
    vocabulary = set(vocabulary)

    # Initialize a list that will hold the sentences
    # after less frequent words are replaced by the unknown token
    replaced_tokenized_sentences = []

    # Go through each sentence
    for sentence in tokenized_sentences:

        # Initialize the list that will contain
        # a single sentence with "unknown_token" replacements
        replaced_sentence = []
        ### START CODE HERE ###

        # For each token in the sentence
        for token in sentence:

            # Check if the token is in the closed vocabulary
            if token in vocabulary:
                # If so, append the word to the replaced_sentence
                replaced_sentence.append(token)
            else:
                # Otherwise, append the unknown token instead
                replaced_sentence.append(unknown_token)
        ### END CODE HERE ###

        # Append the list of tokens to the list of lists
        replaced_tokenized_sentences.append(replaced_sentence)

    return replaced_tokenized_sentences
```

Test:

```python
tokenized_sentences = [["dogs", "run"], ["cats", "sleep"]]
vocabulary = ["dogs", "sleep"]
tmp_replaced_tokenized_sentences = replace_oov_words_by_unk(tokenized_sentences, vocabulary)
print("Original sentence:")
print(tokenized_sentences)
print("tokenized_sentences with less frequent words converted to '<unk>':")
print(tmp_replaced_tokenized_sentences)
```

Output:

```
Original sentence:
[['dogs', 'run'], ['cats', 'sleep']]
tokenized_sentences with less frequent words converted to '<unk>':
[['dogs', '<unk>'], ['<unk>', 'sleep']]
```

Exercise 07

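Combine the functions implemented so far to process the data for real.

- Find the tokens that appear at least `count_threshold` times in the training data.
- Replace the tokens that appear fewer than `count_threshold` times with `<unk>`, in both the training data and the test data.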

```python
# UNIT TEST COMMENT: Candidate for Table Driven Tests
### UNQ_C7 GRADED_FUNCTION: preprocess_data ###
def preprocess_data(train_data, test_data, count_threshold, unknown_token="<unk>",
                    get_words_with_nplus_frequency=get_words_with_nplus_frequency,
                    replace_oov_words_by_unk=replace_oov_words_by_unk):
    """
    Preprocess data, i.e.,
        - Find tokens that appear at least N times in the training data.
        - Replace tokens that appear less than N times by "<unk>" both for training and test data.

    Args:
        train_data, test_data: List of lists of strings.
        count_threshold: Words whose count is less than this are
                         treated as unknown.

    Returns:
        Tuple of
        - training data with low frequent words replaced by "<unk>"
        - test data with low frequent words replaced by "<unk>"
        - vocabulary of words that appear n times or more in the training data
    """
    ### START CODE HERE ###

    # Get the closed vocabulary using the train data
    vocabulary = get_words_with_nplus_frequency(train_data, count_threshold)

    # For the train data, replace less common words with "<unk>"
    train_data_replaced = replace_oov_words_by_unk(train_data, vocabulary, unknown_token)

    # For the test data, replace less common words with "<unk>"
    test_data_replaced = replace_oov_words_by_unk(test_data, vocabulary, unknown_token)

    ### END CODE HERE ###
    return train_data_replaced, test_data_replaced, vocabulary
```

Test:

```python
# test your code
tmp_train = [['sky', 'is', 'blue', '.'],
             ['leaves', 'are', 'green']]
tmp_test = [['roses', 'are', 'red', '.']]

tmp_train_repl, tmp_test_repl, tmp_vocab = preprocess_data(tmp_train,
                                                           tmp_test,
                                                           count_threshold=1)

print("tmp_train_repl")
print(tmp_train_repl)
print()
print("tmp_test_repl")
print(tmp_test_repl)
print()
print("tmp_vocab")
print(tmp_vocab)
```

Output:

```
tmp_train_repl
[['sky', 'is', 'blue', '.'], ['leaves', 'are', 'green']]

tmp_test_repl
[['<unk>', 'are', '<unk>', '.']]

tmp_vocab
['sky', 'is', 'blue', '.', 'leaves', 'are', 'green']
```

Process the full dataset:

```python
minimum_freq = 2
train_data_processed, test_data_processed, vocabulary = preprocess_data(train_data,
                                                                        test_data,
                                                                        minimum_freq)

print("First preprocessed training sample:")
print(train_data_processed[0])
print()
print("First preprocessed test sample:")
print(test_data_processed[0])
print()
print("First 10 vocabulary:")
print(vocabulary[0:10])
print()
print("Size of vocabulary:", len(vocabulary))
```

Output:

```
First preprocessed training sample:
['i', 'personally', 'would', 'like', 'as', 'our', 'official', 'glove', 'of', 'the', 'team', 'local', 'company', 'and', 'quality', 'production']

First preprocessed test sample:
['that', 'picture', 'i', 'just', 'seen', 'whoa', 'dere', '!', '!', '>', '>', '>', '>', '>', '>', '>']

First 10 vocabulary:
['i', 'personally', 'would', 'like', 'as', 'our', 'official', 'glove', 'of', 'the']

Size of vocabulary: 14823
```
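The vocabulary size depends directly on the chosen `minimum_freq`. One rough way to get a feel for that trade-off is to sweep the threshold, as in the sketch below. The helper `vocab_size_by_threshold` and the toy training data are assumptions for illustration; no numbers for the real Twitter data are implied.

```python
from collections import Counter


def vocab_size_by_threshold(tokenized_sentences, thresholds):
    """Map each threshold to the number of words occurring at least that often."""
    counts = Counter(tok for sentence in tokenized_sentences for tok in sentence)
    return {t: sum(1 for c in counts.values() if c >= t) for t in thresholds}


# Toy stand-in for the training sentences.
toy_train = [
    ["the", "sky", "is", "blue", "."],
    ["the", "sea", "is", "blue", "."],
    ["the", "grass", "is", "green", "."],
]
print(vocab_size_by_threshold(toy_train, [1, 2, 3]))
```

A higher threshold shrinks the vocabulary and turns more tokens into `<unk>`; a lower one keeps rare words whose counts are less reliable.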