Theory lecture: C1W1. Sentiment Analysis with Logistic Regression


Preprocessing

The main task is to build a preprocessing function for tweets, mainly using the NLTK package to preprocess the Twitter dataset.

Import packages

    import nltk                                # Python library for NLP
    from nltk.corpus import twitter_samples    # sample Twitter dataset from NLTK
    import matplotlib.pyplot as plt            # library for visualization
    import random                              # pseudo-random number generator

To download twitter_samples, run:

    nltk.download()

or

    nltk.download('twitter_samples')

If downloading twitter_samples fails, go to https://www.nltk.org/nltk_data/, download twitter_samples.zip manually, and place it in the corpora directory; there is no need to unzip it.

Introduction to the Twitter dataset

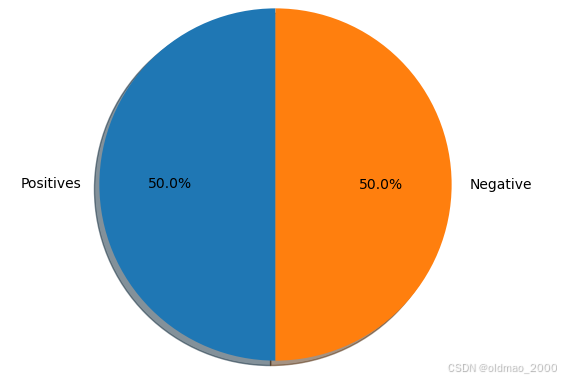

NLTK's Twitter sample dataset is split into positive and negative tweets, with exactly 5000 of each. The exact match between the classes is no coincidence: the intent is a balanced dataset. This does not reflect the real distribution of positive and negative classes in a live Twitter stream (where positives outnumber negatives). It is simply that a balanced dataset simplifies the design of most computational methods needed for sentiment analysis.

Once the download and import succeed, load the data with the following code:

    # select the set of positive and negative tweets
    all_positive_tweets = twitter_samples.strings('positive_tweets.json')
    all_negative_tweets = twitter_samples.strings('negative_tweets.json')

Print the number of positive and negative tweets, and the data structure of the dataset:

    print('Number of positive tweets: ', len(all_positive_tweets))
    print('Number of negative tweets: ', len(all_negative_tweets))

    print('\nThe type of all_positive_tweets is: ', type(all_positive_tweets))
    print('The type of a tweet entry is: ', type(all_negative_tweets[0]))

The dataset is a list, and each tweet in it is a string. Next, visualize it with Matplotlib's pyplot library:

    # Declare a figure with a custom size
    fig = plt.figure(figsize=(5, 5))

    # labels for the two classes
    labels = 'Positives', 'Negative'

    # Sizes for each slice
    sizes = [len(all_positive_tweets), len(all_negative_tweets)]

    # Declare pie chart, where the slices will be ordered and plotted counter-clockwise:
    plt.pie(sizes, labels=labels, autopct='%1.1f%%', shadow=True, startangle=90)

    # Equal aspect ratio ensures that pie is drawn as a circle.
    plt.axis('equal')

    # Display the chart
    plt.show()

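Viewing the raw text

Print one random positive tweet and one random negative tweet. The code prepends a color code to each string to make the two easier to tell apart (the raw tweets may contain profanity):

    # random.randint is inclusive at both ends, so the upper bound is len - 1

    # print positive in green
    print('\033[92m' + all_positive_tweets[random.randint(0, len(all_positive_tweets) - 1)])

    # print negative in red
    print('\033[91m' + all_negative_tweets[random.randint(0, len(all_negative_tweets) - 1)])

Processing the raw text

Data preprocessing is one of the key steps in any machine learning project. It consists of cleaning and formatting the data before feeding it to a machine learning algorithm. For NLP, the preprocessing steps usually include the following tasks:

- Tokenization
- Lowercasing
- Removing stop words and punctuation
- Stemming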

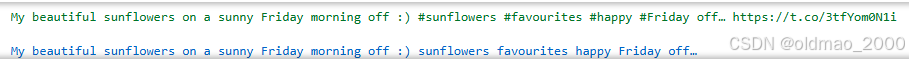

Here we pick a single tweet as an example and look at how each preprocessing step transforms it.

    # Our selected sample. Complex enough to exemplify each step
    tweet = all_positive_tweets[2277]
    print(tweet)

Next, download the stop words:

    # download the stopwords from NLTK
    nltk.download('stopwords')

If the download fails, install them manually: download stopwords.zip and place it in the corpora directory.

Import the following libraries:

    import re                                  # library for regular expression operations
    import string                              # for string operations

    from nltk.corpus import stopwords          # module for stop words that come with NLTK
    from nltk.stem import PorterStemmer        # module for stemming
    from nltk.tokenize import TweetTokenizer   # module for tokenizing strings

Removing hyperlinks, Twitter marks, and styles

We use the re library to run regular-expression operations on the tweet. We define search patterns and use the sub() method to remove matches by replacing them with an empty string (i.e. '').

    print('\033[92m' + tweet)
    print('\033[94m')

    # remove old style retweet text "RT"
    tweet2 = re.sub(r'^RT[\s]+', '', tweet)

    # remove hyperlinks
    tweet2 = re.sub(r'https?://[^\s\n\r]+', '', tweet2)

    # remove hashtags
    # only removing the hash # sign from the word
    tweet2 = re.sub(r'#', '', tweet2)

    print(tweet2)

Result:

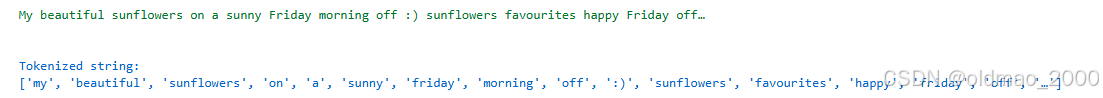
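As a small self-contained sketch of the same three substitutions, the snippet below applies them to a made-up string. The sample text and the variable names are hypothetical and are not taken from the dataset; only the regular expressions come from the code above.

```python
import re

# Hypothetical tweet text, invented for illustration (not from twitter_samples)
sample = "RT @user: Loving the #sunshine today https://t.co/abc123 :)"

# 1. drop the old-style retweet marker at the start of the string
no_rt = re.sub(r'^RT[\s]+', '', sample)

# 2. drop hyperlinks (everything from http:// or https:// up to the next whitespace)
no_url = re.sub(r'https?://[^\s\n\r]+', '', no_rt)

# 3. drop only the '#' sign, keeping the hashtag word itself
cleaned = re.sub(r'#', '', no_url)

print(cleaned)
```

Note that the handle (@user) is still present at this point; in this walkthrough it is only removed later by the tokenizer, through strip_handles=True.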

Tokenization

Use the tokenize module in NLTK (Chinese text requires a different word-segmentation library):

    print()
    print('\033[92m' + tweet2)
    print('\033[94m')

    # instantiate tokenizer class
    tokenizer = TweetTokenizer(preserve_case=False, strip_handles=True,
                               reduce_len=True)

    # tokenize tweets
    tweet_tokens = tokenizer.tokenize(tweet2)

    print()
    print('Tokenized string:')
    print(tweet_tokens)

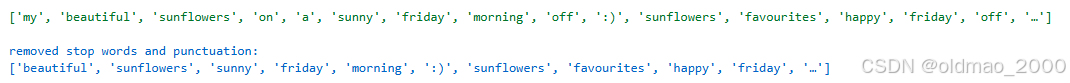

Removing stop words and punctuation

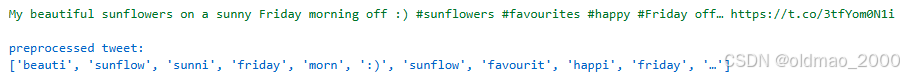

The list loaded here contains English stop words; dedicated stop word lists also exist for Chinese.

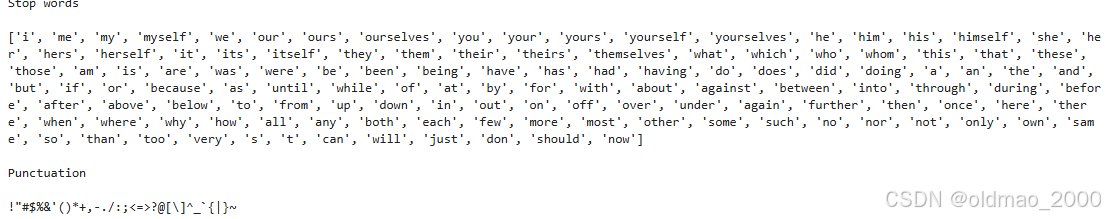

    # Import the english stop words list from NLTK
    stopwords_english = stopwords.words('english')

    print('Stop words\n')
    print(stopwords_english)

    print('\nPunctuation\n')
    print(string.punctuation)

As you can see, the stop word list above contains some words that could be important in certain contexts, such as i, not, between, because, won, and against. In some applications you may need to customize the stop word list. For our exercise, we use the entire list.

As for punctuation, we noted earlier that certain groupings, such as "😃" and "...", should be kept when processing tweets because they express emotion. In other settings, such as medical analysis, these should be removed as well.

    print()
    print('\033[92m')
    print(tweet_tokens)
    print('\033[94m')

    tweets_clean = []

    for word in tweet_tokens:  # Go through every word in your tokens list
        if (word not in stopwords_english and  # remove stopwords
                word not in string.punctuation):  # remove punctuation
            tweets_clean.append(word)

    print('removed stop words and punctuation:')
    print(tweets_clean)
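The filter above can be tried without NLTK. The sketch below assumes a tiny, hypothetical stop word set (toy_stopwords) in place of stopwords.words('english') and a hand-written token list. Because string.punctuation is a single string, `word not in string.punctuation` is a substring test: single marks such as "!" are dropped, while groupings such as ":)" and "..." survive because they are not contiguous substrings of it.

```python
import string

# Hypothetical, heavily shortened stop word set; the walkthrough uses
# stopwords.words('english') from NLTK instead
toy_stopwords = {'the', 'on', 'a', 'my', 'is'}

# Hand-written tokens standing in for the tokenizer output
tokens = ['my', 'beautiful', 'sunflowers', 'on', 'a', 'sunny', 'morning',
          ':)', '...', '!', '.']

# Same condition as the loop above: drop stop words and punctuation
kept = [tok for tok in tokens
        if tok not in toy_stopwords and tok not in string.punctuation]

print(kept)
```

One consequence of the substring test is that a two-character token which happens to appear consecutively in string.punctuation (for example ":;") would also be removed.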

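Stemming

Stemming is the process of converting a word to its most general form, or stem. This helps reduce the size of our vocabulary. For example:

- learn
- learning
- learned
- learnt

All of these words stem from the common root learn. In some cases, however, the stemming process produces words that are not correct spellings of the root, for example happi and sunni. This is because it picks the most common stem for the related words. For example, consider the group of words made up of the different forms of happy:

- happy
- happiness
- happier

We can see that the prefix happi is the more commonly used one. We cannot choose happ, because it is also the stem of unrelated words such as happen. NLTK has several stemming modules; here we use the PorterStemmer module, which implements the Porter stemming algorithm.

    print()
    print('\033[92m')
    print(tweets_clean)
    print('\033[94m')

    # Instantiate stemming class
    stemmer = PorterStemmer()

    # Create an empty list to store the stems
    tweets_stem = []

    for word in tweets_clean:
        stem_word = stemmer.stem(word)  # stemming word
        tweets_stem.append(stem_word)  # append to the list

    print('stemmed words:')
    print(tweets_stem)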

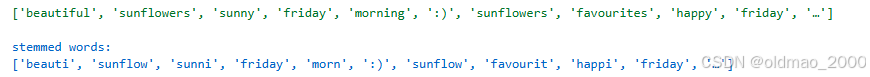

process_tweet()

Combining the steps above gives the process_tweet function, which we save in utils.py:

    import re
    import string

    import numpy as np
    from nltk.corpus import stopwords
    from nltk.stem import PorterStemmer
    from nltk.tokenize import TweetTokenizer


    def process_tweet(tweet):
        """Process tweet function.
        Input:
            tweet: a string containing a tweet
        Output:
            tweets_clean: a list of words containing the processed tweet
        """
        stemmer = PorterStemmer()
        stopwords_english = stopwords.words('english')
        # remove stock market tickers like $GE
        tweet = re.sub(r'\$\w*', '', tweet)
        # remove old style retweet text "RT"
        tweet = re.sub(r'^RT[\s]+', '', tweet)
        # remove hyperlinks
        tweet = re.sub(r'https?://[^\s\n\r]+', '', tweet)
        # remove hashtags
        # only removing the hash # sign from the word
        tweet = re.sub(r'#', '', tweet)
        # tokenize tweets
        tokenizer = TweetTokenizer(preserve_case=False, strip_handles=True,
                                   reduce_len=True)
        tweet_tokens = tokenizer.tokenize(tweet)

        tweets_clean = []
        for word in tweet_tokens:
            if (word not in stopwords_english and  # remove stopwords
                    word not in string.punctuation):  # remove punctuation
                stem_word = stemmer.stem(word)  # stemming word
                tweets_clean.append(stem_word)

        return tweets_clean

Here is an example call:

    from utils import process_tweet  # Import the process_tweet function

    # choose the same tweet
    tweet = all_positive_tweets[2277]

    print()
    print('\033[92m')
    print(tweet)
    print('\033[94m')

    # call the imported function
    tweets_stem = process_tweet(tweet)  # Preprocess a given tweet

    print('preprocessed tweet:')
    print(tweets_stem)  # Print the result

Building and visualizing word frequencies

Import packages

    import nltk                                  # Python library for NLP
    from nltk.corpus import twitter_samples      # sample Twitter dataset from NLTK
    import matplotlib.pyplot as plt              # visualization library
    import numpy as np                           # library for scientific computing and matrix operations

    # import our convenience functions
    from utils import process_tweet, build_freqs

The process_tweet function was covered in the previous section. Here we also import build_freqs, which builds a frequency dictionary mapping each (word, sentiment) pair to how often it occurs. Details follow below.

Loading the dataset

    # select the lists of positive and negative tweets
    all_positive_tweets = twitter_samples.strings('positive_tweets.json')
    all_negative_tweets = twitter_samples.strings('negative_tweets.json')

    # concatenate the lists, 1st part is the positive tweets followed by the negative
    tweets = all_positive_tweets + all_negative_tweets

    # let's see how many tweets we have
    print("Number of tweets: ", len(tweets))

Result:

Number of tweets: 10000

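Next we build a label array that matches the sentiment of each tweet. A NumPy array works much like a regular list but is optimized for computation and manipulation. The label array has 10000 elements: the first 5000 are filled with 1 labels for positive sentiment, and the last 5000 with 0 labels for negative sentiment.

- np.ones(): creates an array of 1s
- np.zeros(): creates an array of 0s
- np.append(): concatenates arrays

    # make a numpy array representing labels of the tweets
    labels = np.append(np.ones((len(all_positive_tweets))), np.zeros((len(all_negative_tweets))))

Dictionaries

In Python, a dictionary is a mutable, indexed collection. It stores entries as key-value pairs and uses a hash table for lookups, so lookup time is essentially independent of the number of entries. Dictionaries are indispensable in NLP because they allow fast retrieval and membership checks even when the collection holds thousands of entries.

Dictionary example

    dictionary = {'key1': 1, 'key2': 2}

The code above defines a dictionary with two entries. Keys and values can be of almost any type (with some restrictions on keys: they must be hashable); in this example we use strings. We could also use floats, integers, tuples, and so on.

Adding or editing entries

New entries can be inserted into a dictionary using square brackets. If the dictionary already contains the given key, its value is overwritten.

    # Add a new entry
    dictionary['key3'] = -5

    # Overwrite the value of key1
    dictionary['key1'] = 0

    print(dictionary)

Result: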

{'key1': 0, 'key2': 2, 'key3': -5}

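Accessing values and looking up keys

Dictionary lookup and retrieval are common tasks in NLP. There are two ways to do this:

- Using square bracket notation: this form is allowed if the lookup key is in the dictionary. Otherwise it raises an error.

- Using the get() method: this lets us set a default value if the dictionary key does not exist.

    # Square bracket lookup when the key exist
    print(dictionary['key2'])

With square brackets, accessing a key that does not exist raises an error (a KeyError), so either guard the access with an if check or use get() and supply the value to return for a missing key:

    # This prints a value
    if 'key1' in dictionary:
        print("item found: ", dictionary['key1'])
    else:
        print('key1 is not defined')

    # Same as what you get with get
    print("item found: ", dictionary.get('key1', -1))

Word frequency dictionary

    def build_freqs(tweets, ys):
        """Build frequencies.
        Input:
            tweets: a list of tweets
            ys: an m x 1 array with the sentiment label of each tweet
                (either 0 or 1)
        Output:
            freqs: a dictionary mapping each (word, sentiment) pair to its
            frequency
        """
        # Convert the numpy array to a list, since zip needs an iterable.
        # squeeze makes sure ys is one-dimensional; if ys is already a flat
        # list, it leaves the values unchanged.
        yslist = np.squeeze(ys).tolist()

        # Start with an empty dictionary that will hold the frequency of
        # each (word, sentiment label) pair.
        freqs = {}
        # Walk through each sentiment label and its tweet.
        for y, tweet in zip(yslist, tweets):
            # Process the tweet into a list of words.
            for word in process_tweet(tweet):
                # Build the (word, sentiment label) tuple.
                pair = (word, y)
                # If the pair is already in the dictionary, increment its count.
                if pair in freqs:
                    freqs[pair] += 1
                # Otherwise add it to the dictionary with a count of 1.
                else:
                    freqs[pair] = 1
        # Return the finished frequency dictionary.
        return freqs

The membership check on the tuple can be replaced with the get() method:

    for y, tweet in zip(yslist, tweets):
        for word in process_tweet(tweet):
            pair = (word, y)
            freqs[pair] = freqs.get(pair, 0) + 1

As the code shows, each key of the dictionary is a two-element tuple (word, y). word is an element of a processed tweet, and y is the label of the corpus it came from: 1 for positive tweets and 0 for negative tweets. Because the labels come from a NumPy float array, y is stored as 1.0 or 0.0; lookups with the integers 1 and 0 still match, since equal numbers hash to the same value in Python. The value associated with the key is the number of times the word appears in that corpus. For example:

    # "followfriday" appears 25 times in the positive tweets
    ('followfriday', 1.0): 25

    # "shame" appears 19 times in the negative tweets
    ('shame', 0.0): 19

Create the frequency dictionary from the tweet data, then print its data type and length:

    # create frequency dictionary
    freqs = build_freqs(tweets, labels)

    # check data type
    print(f'type(freqs) = {type(freqs)}')

    # check length of the dictionary
    print(f'len(freqs) = {len(freqs)}')

Result: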

type(freqs) = <class 'dict'>

len(freqs) = 13234
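To make the (word, sentiment) key structure concrete, here is a minimal sketch on three made-up tweets. toy_build_freqs is a hypothetical stand-in for build_freqs: it assumes plain lowercasing and whitespace splitting instead of process_tweet, so it runs without NLTK.

```python
def toy_build_freqs(tweets, labels):
    """Count (word, label) pairs; a simplified stand-in for build_freqs."""
    freqs = {}
    for label, tweet in zip(labels, tweets):
        # Assumption: whitespace tokenization replaces process_tweet here
        for word in tweet.lower().split():
            pair = (word, label)
            freqs[pair] = freqs.get(pair, 0) + 1
    return freqs


# Hypothetical toy corpus: 1 = positive, 0 = negative
toy_tweets = ['happy happy day', 'sad day', 'happy again']
toy_labels = [1, 0, 1]

toy_freqs = toy_build_freqs(toy_tweets, toy_labels)
print(toy_freqs)

# The same word is counted separately per sentiment class
print(toy_freqs[('day', 1)], toy_freqs[('day', 0)])

# A float key finds the entry stored under the equal integer key
print(toy_freqs.get(('happy', 1.0), 0))
```

The word day ends up with one entry per class, which is exactly what lets the later steps compare how often a word appears in positive versus negative tweets.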

Tabulating word frequencies

Select a small set of words to visualize:

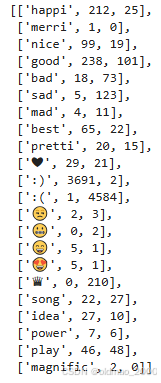

    # select some words to appear in the report. we will assume that each word is unique (i.e. no duplicates)
    keys = ['happi', 'merri', 'nice', 'good', 'bad', 'sad', 'mad', 'best', 'pretti',
            '❤', ':)', ':(', '😒', '😬', '😄', '😍', '♛',
            'song', 'idea', 'power', 'play', 'magnific']

    # list representing our table of word counts.
    # each element consist of a sublist with this pattern: [<word>, <positive_count>, <negative_count>]
    data = []

    # loop through our selected words
    for word in keys:

        # initialize positive and negative counts
        pos = 0
        neg = 0

        # retrieve number of positive counts
        if (word, 1) in freqs:
            pos = freqs[(word, 1)]

        # retrieve number of negative counts
        if (word, 0) in freqs:
            neg = freqs[(word, 0)]

        # append the word counts to the table
        data.append([word, pos, neg])

    data

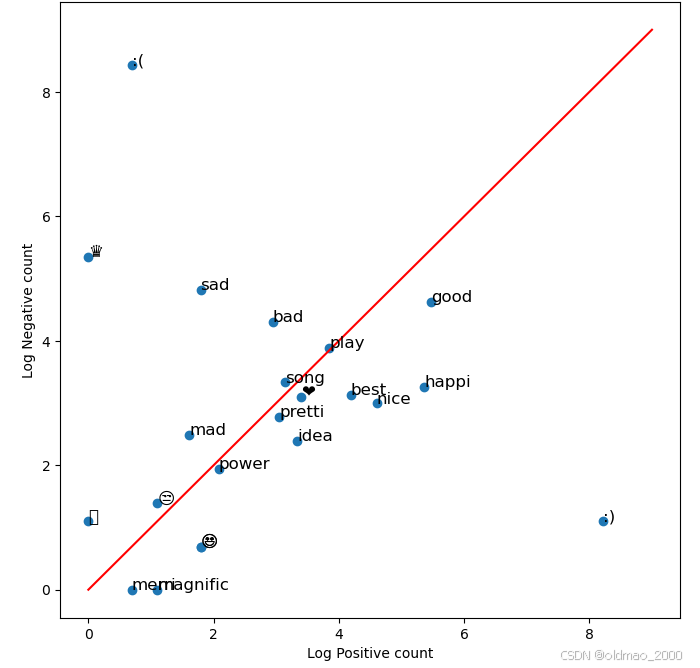

We can then use a scatter plot to inspect this table visually. Instead of plotting raw counts, we plot them on a logarithmic scale to account for the huge differences between raw counts (for example, ☺ has 3691 counts in the positive area but only 2 in the negative area). The red line marks the boundary between the positive and negative areas; words close to it can be classified as neutral.

    fig, ax = plt.subplots(figsize=(8, 8))

    # convert positive raw counts to logarithmic scale. we add 1 to avoid log(0)
    x = np.log([x[1] + 1 for x in data])

    # do the same for the negative counts
    y = np.log([x[2] + 1 for x in data])

    # Plot a dot for each pair of words
    ax.scatter(x, y)

    # assign axis labels
    plt.xlabel("Log Positive count")
    plt.ylabel("Log Negative count")

    # Add the word as the label at the same position as you added the points just before
    for i in range(0, len(data)):
        ax.annotate(data[i][0], (x[i], y[i]), fontsize=12)

    ax.plot([0, 9], [0, 9], color='red')  # Plot the red line that divides the 2 areas.
    plt.show()

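The plot shows that the two items that convey sentiment most strongly are the smiley face and the sad face, so for sentiment analysis it is best to keep this kind of punctuation.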
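The effect of the log(count + 1) transform used for the scatter plot can be checked by hand. The sketch below takes the two counts quoted earlier (3691 positive, 2 negative) and uses math.log from the standard library in place of np.log; the variable names are illustrative only.

```python
import math

# Counts quoted in the text for the smiley token
pos_count, neg_count = 3691, 2

# Same transform as in the plot: natural log of (count + 1)
log_pos = math.log(pos_count + 1)
log_neg = math.log(neg_count + 1)

# A word that never occurs in a class maps to exactly 0 instead of -infinity
log_zero = math.log(0 + 1)

print(round(log_pos, 2), round(log_neg, 2), log_zero)
```

A raw ratio of roughly 1800 to 1 is compressed into coordinates of about 8.2 and 1.1, which is why all the points fit inside the 0 to 9 range spanned by the red line.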

Visualizing the logistic regression model

Import packages

    import nltk                         # NLP toolbox
    from os import getcwd
    import pandas as pd                 # Library for Dataframes
    from nltk.corpus import twitter_samples
    import matplotlib.pyplot as plt     # Library for visualization
    import numpy as np                  # Library for math functions

    from utils import process_tweet, build_freqs  # Our functions for NLP

Loading the Twitter dataset

    # select the set of positive and negative tweets
    all_positive_tweets = twitter_samples.strings('positive_tweets.json')
    all_negative_tweets = twitter_samples.strings('negative_tweets.json')

    tweets = all_positive_tweets + all_negative_tweets  # Concatenate the lists.
    labels = np.append(np.ones((len(all_positive_tweets), 1)), np.zeros((len(all_negative_tweets), 1)), axis=0)

    # split the data into two pieces, one for training and one for testing (validation set)
    train_pos = all_positive_tweets[:4000]
    train_neg = all_negative_tweets[:4000]

    train_x = train_pos + train_neg

    print("Number of tweets: ", len(train_x))

Loading the features (sentiment word frequencies)

The word-frequency features of each tweet are stored in logistic_features.csv and can be loaded directly:

For how the word-frequency features of a tweet are computed, see: C1W1. Sentiment Analysis with Logistic Regression

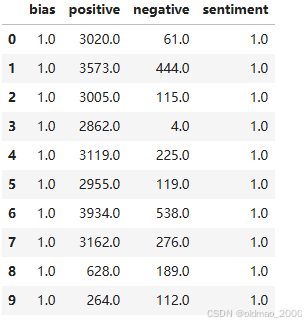

    data = pd.read_csv('./data/logistic_features.csv')  # Load a 3 columns csv file using pandas function
    data.head(10)  # Print the first 10 data entries

Next, drop the dataframe and keep only NumPy arrays:

    # Each feature is labeled as bias, positive and negative
    X = data[['bias', 'positive', 'negative']].values  # Get only the numerical values of the dataframe
    Y = data['sentiment'].values  # Put in Y the corresponding labels or sentiments

    print(X.shape)  # Print the shape of the X part
    print(X)  # Print some rows of X

Loading a pretrained logistic regression model

The model is simply three parameters $\theta$:

    theta = [6.03518871e-08, 5.38184972e-04, -5.58300168e-04]

Scatter plot of the samples

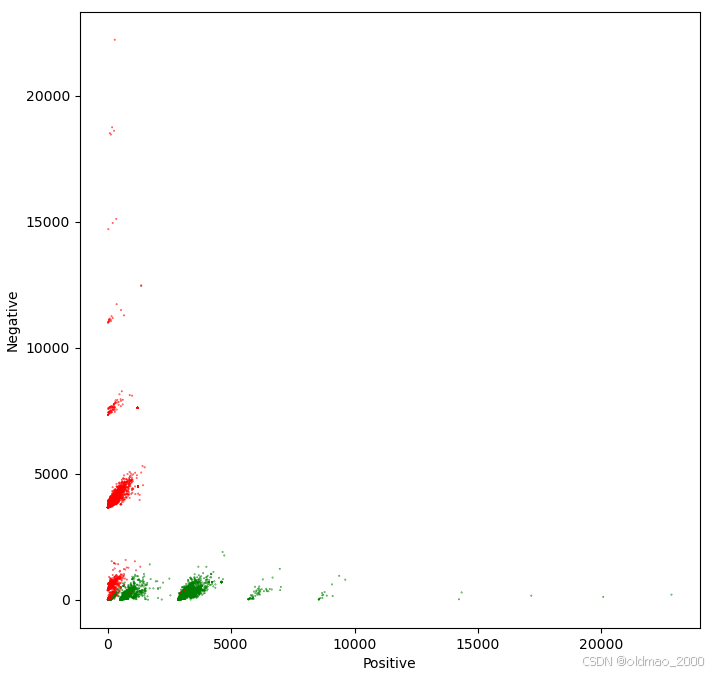

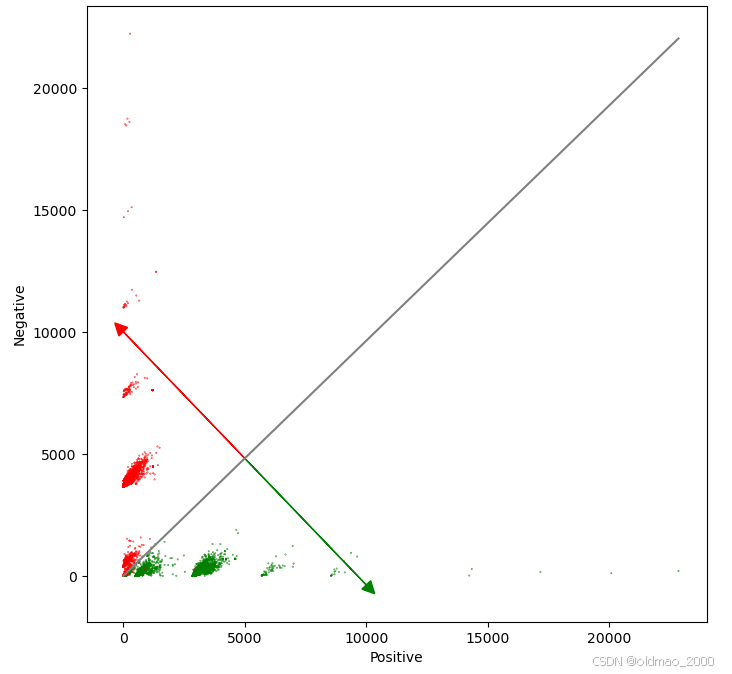

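The vector theta represents a plane that splits our feature space into two parts. Samples above this plane are treated as positive, and samples below it as negative. We have a 3D feature space, i.e. each tweet is a vector of three values: [bias, positive_sum, negative_sum], where bias = 1.

If we ignore the bias term, we can plot each tweet on a Cartesian plane using positive_sum and negative_sum. Positive tweets are drawn in green and negative tweets in red.

    # Plot the samples using columns 1 and 2 of the matrix
    fig, ax = plt.subplots(figsize=(8, 8))

    colors = ['red', 'green']

    # Color based on the sentiment Y
    ax.scatter(X[:, 1], X[:, 2], c=[colors[int(k)] for k in Y], s=0.1)  # Plot a dot for each tweet
    plt.xlabel("Positive")
    plt.ylabel("Negative")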

Plotting the logistic regression model

The plot above shows a very clear separation between positive and negative samples. We now draw a gray line marking the boundary between the positive and negative regions:

$$z = \theta * x = 0$$

To draw this line, we have to solve the equation above for one of the independent variables.

$$z = \theta * x = 0$$

$$x = [1, pos, neg]$$

$$z(\theta, x) = \theta_0 + \theta_1 * pos + \theta_2 * neg = 0$$

$$neg = (-\theta_0 - \theta_1 * pos) / \theta_2$$

The red and green lines pointing toward the corresponding sentiments are computed from a line perpendicular to the separation line obtained in the previous equations (the neg function). It must point in the same direction as the derivative of the logit function, though its magnitude may differ. It serves only as a visual representation of the model.

$$direction = pos * \theta_2 / \theta_1$$

The code is as follows:

    # Equation for the separation plane
    # It give a value in the negative axe as a function of a positive value
    # f(pos, neg, W) = w0 + w1 * pos + w2 * neg = 0
    # s(pos, W) = (-w0 - w1 * pos) / w2
    def neg(theta, pos):
        return (-theta[0] - pos * theta[1]) / theta[2]

    # Equation for the direction of the sentiments change
    # We don't care about the magnitude of the change. We are only interested
    # in the direction. So this direction is just a perpendicular function to the
    # separation plane
    # df(pos, W) = pos * w2 / w1
    def direction(theta, pos):
        return pos * theta[2] / theta[1]

    # Plot the samples using columns 1 and 2 of the matrix
    fig, ax = plt.subplots(figsize=(8, 8))

    colors = ['red', 'green']

    # Color base on the sentiment Y
    ax.scatter(X[:, 1], X[:, 2], c=[colors[int(k)] for k in Y], s=0.1)  # Plot a dot for each tweet
    plt.xlabel("Positive")
    plt.ylabel("Negative")

    # Now lets represent the logistic regression model in this chart.
    maxpos = np.max(X[:, 1])

    offset = 5000  # The pos value for the direction vectors origin

    # Plot a gray line that divides the 2 areas.
    ax.plot([0, maxpos], [neg(theta, 0), neg(theta, maxpos)], color='gray')

    # Plot a green line pointing to the positive direction
    ax.arrow(offset, neg(theta, offset), offset, direction(theta, offset),
             head_width=500, head_length=500, fc='g', ec='g')
    # Plot a red line pointing to the negative direction
    ax.arrow(offset, neg(theta, offset), -offset, -direction(theta, offset),
             head_width=500, head_length=500, fc='r', ec='r')

    plt.show()

In the plot, the green line points in the direction where z > 0 and the red line in the direction where z < 0.
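The boundary and direction formulas can be checked numerically without the dataset. The sketch below reuses theta and the neg and direction functions from the code above; the helper z and the other variable names are introduced here only for illustration.

```python
theta = [6.03518871e-08, 5.38184972e-04, -5.58300168e-04]


def neg(theta, pos):
    # Boundary: solve theta0 + theta1 * pos + theta2 * neg = 0 for neg
    return (-theta[0] - pos * theta[1]) / theta[2]


def direction(theta, pos):
    # Vertical component of a vector perpendicular to the boundary
    return pos * theta[2] / theta[1]


def z(theta, pos, neg_value):
    # Illustrative helper: the linear score for features [1, pos, neg_value]
    return theta[0] + theta[1] * pos + theta[2] * neg_value


offset = 5000
on_line = neg(theta, offset)

# 1. A point on the gray line has z equal to 0 (up to rounding)
z_on_line = z(theta, offset, on_line)

# 2. The slopes of the boundary and of the arrow multiply to -1 (perpendicular)
line_slope = -theta[1] / theta[2]
arrow_slope = direction(theta, offset) / offset
slope_product = line_slope * arrow_slope

# 3. The tip of the green arrow has z > 0, the tip of the red arrow z < 0
z_green = z(theta, offset + offset, on_line + direction(theta, offset))
z_red = z(theta, offset - offset, on_line - direction(theta, offset))

print(z_on_line, slope_product, z_green, z_red)
```

Because theta[1] is positive and theta[2] is negative, the green arrow moves toward larger positive sums and smaller negative sums, which is the direction in which the score z grows.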