阅读量:3

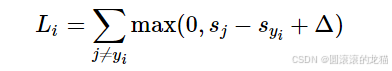

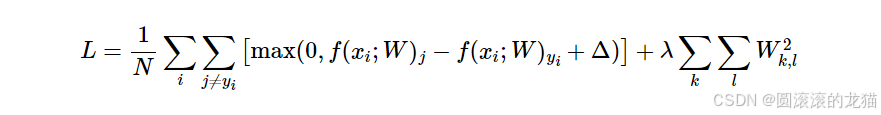

(SVM) 损失。SVM 损失的设置是,SVM“希望”每个图像的正确类别的得分比错误类别高出一定幅度Δ。

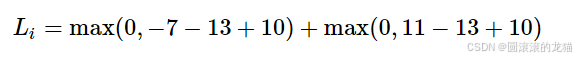

即假设有一个分数集合s=[13,−7,11]

如果y0为真实值,超参数为10,则该损失值为

超参数是指在机器学习算法的训练过程中需要设置的参数,它们不同于模型本身的参数(例如权重和偏置),是需要在训练之前预先确定的。超参数在模型训练和性能优化中起着关键作用。

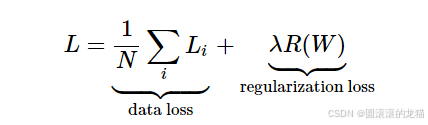

正则化

def L_i(x, y, W): """ unvectorized version. Compute the multiclass svm loss for a single example (x,y) - x is a column vector representing an image (e.g. 3073 x 1 in CIFAR-10) with an appended bias dimension in the 3073-rd position (i.e. bias trick) - y is an integer giving index of correct class (e.g. between 0 and 9 in CIFAR-10) - W is the weight matrix (e.g. 10 x 3073 in CIFAR-10) """ delta = 1.0 # see notes about delta later in this section scores = W.dot(x) # scores becomes of size 10 x 1, the scores for each class correct_class_score = scores[y] D = W.shape[0] # number of classes, e.g. 10 loss_i = 0.0 for j in range(D): # iterate over all wrong classes if j == y: # skip for the true class to only loop over incorrect classes continue # accumulate loss for the i-th example loss_i += max(0, scores[j] - correct_class_score + delta) return loss_i def L_i_vectorized(x, y, W): """ A faster half-vectorized implementation. half-vectorized refers to the fact that for a single example the implementation contains no for loops, but there is still one loop over the examples (outside this function) """ delta = 1.0 scores = W.dot(x) # compute the margins for all classes in one vector operation margins = np.maximum(0, scores - scores[y] + delta) # on y-th position scores[y] - scores[y] canceled and gave delta. We want # to ignore the y-th position and only consider margin on max wrong class margins[y] = 0 loss_i = np.sum(margins) return loss_i def L(X, y, W): """ fully-vectorized implementation : - X holds all the training examples as columns (e.g. 3073 x 50,000 in CIFAR-10) - y is array of integers specifying correct class (e.g. 50,000-D array) - W are weights (e.g. 10 x 3073) """ # evaluate loss over all examples in X without using any for loops # left as exercise to reader in the assignment